Dot product

Algebraic operation on coordinate vectors

In mathematics, the dot product or scalar product[note 1] is an algebraic operation that takes two equal-length sequences of numbers (usually coordinate vectors), and returns a single number. In Euclidean geometry, the dot product of the Cartesian coordinates of two vectors is widely used. It is often called the inner product (or rarely the projection product) of Euclidean space, even though it is not the only inner product that can be defined on Euclidean space (see Inner product space for more).

Algebraically, the dot product is the sum of the products of the corresponding entries of the two sequences of numbers. Geometrically, it is the product of the Euclidean magnitudes of the two vectors and the cosine of the angle between them. These definitions are equivalent when using Cartesian coordinates. In modern geometry, Euclidean spaces are often defined by using vector spaces. In this case, the dot product is used for defining lengths (the length of a vector is the square root of the dot product of the vector with itself) and angles (the cosine of the angle between two vectors is the quotient of their dot product by the product of their lengths).

The name "dot product" is derived from the dot operator " · " that is often used to designate this operation;[1] the alternative name "scalar product" emphasizes that the result is a scalar, rather than a vector (as with the vector product in three-dimensional space).

The dot product may be defined algebraically or geometrically. The geometric definition is based on the notions of angle and distance (magnitude) of vectors. The equivalence of these two definitions relies on having a Cartesian coordinate system for Euclidean space.

In modern presentations of Euclidean geometry, the points of space are defined in terms of their Cartesian coordinates, and Euclidean space itself is commonly identified with the real coordinate space $\mathbf{R}^n$.

Coordinate definition


The dot product of two vectors $\mathbf{a} = [a_1, a_2, \cdots, a_n]$ and $\mathbf{b} = [b_1, b_2, \cdots, b_n]$ is defined as:

$$\mathbf{a} \cdot \mathbf{b} = \sum_{i=1}^{n} a_i b_i = a_1 b_1 + a_2 b_2 + \cdots + a_n b_n$$

where $\Sigma$ denotes summation and $n$ is the dimension of the vector space. For instance, in three-dimensional space, the dot product of the vectors $[1, 3, -5]$ and $[4, -2, -1]$ is:

$$\begin{aligned} [1, 3, -5] \cdot [4, -2, -1] &= (1 \times 4) + (3 \times -2) + (-5 \times -1) \\ &= 4 - 6 + 5 \\ &= 3 \end{aligned}$$

Likewise, the dot product of the vector $[1, 3, -5]$ with itself is:

$$\begin{aligned} [1, 3, -5] \cdot [1, 3, -5] &= (1 \times 1) + (3 \times 3) + (-5 \times -5) \\ &= 1 + 9 + 25 \\ &= 35 \end{aligned}$$

If vectors are identified with column vectors, the dot product can also be written as a matrix product

$$\mathbf{a} \cdot \mathbf{b} = \mathbf{a}^{\mathsf{T}} \mathbf{b},$$

where $\mathbf{a}^{\mathsf{T}}$ denotes the transpose of $\mathbf{a}$.

Expressing the above example in this way, a 1 × 3 matrix (row vector) is multiplied by a 3 × 1 matrix (column vector) to get a 1 × 1 matrix that is identified with its unique entry:

$$\begin{bmatrix} 1 & 3 & -5 \end{bmatrix} \begin{bmatrix} 4 \\ -2 \\ -1 \end{bmatrix} = 3.$$
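The coordinate definition can be checked directly against the worked examples above. The following is a minimal sketch in plain Python (standard library only); the helper names `dot` and `matmul` are hypothetical choices for illustration, not a library API.

```python
from typing import List, Sequence


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    """Sum of the products of corresponding entries of two equal-length sequences."""
    if len(a) != len(b):
        raise ValueError("dot product requires sequences of equal length")
    return sum(x * y for x, y in zip(a, b))


def matmul(A: List[List[float]], B: List[List[float]]) -> List[List[float]]:
    """Matrix product of nested lists: entry (i, j) is row i of A times column j of B."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]


a = [1, 3, -5]
b = [4, -2, -1]

print(dot(a, b))  # 3
print(dot(a, a))  # 35

# Matrix form a^T b: a 1x3 row vector times a 3x1 column vector gives a 1x1 matrix
row = [a]               # 1x3
col = [[x] for x in b]  # 3x1
print(matmul(row, col))  # [[3]]
```

The length check makes the "equal-length sequences" requirement explicit rather than letting `zip` silently truncate the longer input.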

Geometric definition


[Figure: Illustration showing how to find the angle between vectors using the dot product]

[Figure: Calculating bond angles of a symmetrical tetrahedral molecular geometry using a dot product]

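In Euclidean space, a Euclidean vector is a geometric object that possesses both a magnitude and a direction. A vector can be pictured as an arrow. Its magnitude is its length, and its direction is the direction in which the arrow points. The magnitude of a vector $\mathbf{a}$ is denoted by $\left\| \mathbf{a} \right\|$. The dot product of two Euclidean vectors $\mathbf{a}$ and $\mathbf{b}$ is defined by

$$\mathbf{a} \cdot \mathbf{b} = \left\| \mathbf{a} \right\| \left\| \mathbf{b} \right\| \cos \theta,$$

where $\theta$ is the angle between $\mathbf{a}$ and $\mathbf{b}$.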

In particular, if the vectors $\mathbf{a}$ and $\mathbf{b}$ are orthogonal (that is, the angle between them is $\pi/2$, or 90°), then $\cos(\pi/2) = 0$, which implies that

$$\mathbf{a} \cdot \mathbf{b} = 0.$$
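Rearranging the geometric definition gives $\cos\theta = \mathbf{a} \cdot \mathbf{b} / (\|\mathbf{a}\|\,\|\mathbf{b}\|)$, so the angle between two non-zero vectors can be computed from their coordinates alone. A minimal Python sketch follows; the function names are illustrative. The last example assumes the vectors $[1, 1, 1]$ and $[1, -1, -1]$, which point from the centre of a cube to two alternate corners, and recovers the tetrahedral angle of about 109.47°.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def norm(a):
    # Euclidean length: square root of the dot product of a vector with itself
    return math.sqrt(dot(a, a))


def angle(a, b):
    """Angle in radians between two non-zero vectors, from a.b = |a| |b| cos(theta)."""
    cos_theta = dot(a, b) / (norm(a) * norm(b))
    # Clamp to [-1, 1] so rounding cannot push the value outside acos's domain
    return math.acos(max(-1.0, min(1.0, cos_theta)))


print(dot([1, 0], [0, 2]))                          # 0: the vectors are orthogonal
print(math.degrees(angle([1, 0], [0, 2])))          # approximately 90
print(math.degrees(angle([1, 0], [1, 1])))          # approximately 45
print(math.degrees(angle([1, 1, 1], [1, -1, -1])))  # approximately 109.47
```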

Scalar projection and first properties


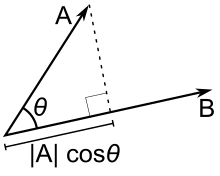

Scalar projection

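The scalar projection (or scalar component) of a Euclidean vector $\mathbf{a}$ in the direction of a Euclidean vector $\mathbf{b}$ is given by

$$a_b = \left\| \mathbf{a} \right\| \cos \theta,$$

where $\theta$ is the angle between $\mathbf{a}$ and $\mathbf{b}$.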

In terms of the geometric definition of the dot product, this can be rewritten as

$$a_b = \mathbf{a} \cdot \widehat{\mathbf{b}},$$

where $\widehat{\mathbf{b}} = \mathbf{b} / \left\| \mathbf{b} \right\|$ is the unit vector in the direction of $\mathbf{b}$.

[Figure: Distributive law for the dot product]

The dot product is thus characterized geometrically by[5]

$$\mathbf{a} \cdot \mathbf{b} = a_b \left\| \mathbf{b} \right\| = b_a \left\| \mathbf{a} \right\|.$$

These properties may be summarized by saying that the dot product is a bilinear form. Moreover, this bilinear form is positive definite, which means that $\mathbf{a} \cdot \mathbf{a}$ is never negative, and is zero if and only if $\mathbf{a} = \mathbf{0}$, the zero vector.
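The scalar projection $a_b = \mathbf{a} \cdot \widehat{\mathbf{b}}$ and the characterization $\mathbf{a} \cdot \mathbf{b} = a_b \|\mathbf{b}\| = b_a \|\mathbf{a}\|$ can be sketched numerically as follows. The vectors are arbitrary illustrative values, and `scalar_projection` is a hypothetical helper name.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def norm(a):
    return math.sqrt(dot(a, a))


def scalar_projection(a, b):
    """Component of a in the direction of non-zero b: a . (b / |b|)."""
    return dot(a, b) / norm(b)


a = [3.0, 4.0]
b = [2.0, 0.0]
a_b = scalar_projection(a, b)  # 3.0: the component of a along the x-axis
b_a = scalar_projection(b, a)  # 6 / 5, up to rounding

# Geometric characterization: a . b equals a_b |b| and b_a |a| (up to rounding)
print(dot(a, b), a_b * norm(b), b_a * norm(a))
```

Dividing by `norm(b)` once, instead of first building the unit vector, gives the same value with fewer operations.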

Equivalence of the definitions


If $\mathbf{e}_1, \cdots, \mathbf{e}_n$ are the standard basis vectors in $\mathbf{R}^n$, then we may write

$$\begin{aligned} \mathbf{a} &= [a_1, \dots, a_n] = \sum_i a_i \mathbf{e}_i \\ \mathbf{b} &= [b_1, \dots, b_n] = \sum_i b_i \mathbf{e}_i. \end{aligned}$$

The vectors $\mathbf{e}_i$ form an orthonormal basis: they have unit length and are at right angles to each other.

[Figure: Vector components in an orthonormal basis]

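Also, by the geometric definition, for any vector $\mathbf{e}_i$ and a vector $\mathbf{a}$,

$$\mathbf{a} \cdot \mathbf{e}_i = \left\| \mathbf{a} \right\| \left\| \mathbf{e}_i \right\| \cos \theta_i = \left\| \mathbf{a} \right\| \cos \theta_i = a_i,$$

where $\theta_i$ is the angle between $\mathbf{a}$ and $\mathbf{e}_i$, and $a_i$ is the component of $\mathbf{a}$ in the direction of $\mathbf{e}_i$.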

Now applying the distributivity of the geometric version of the dot product gives

$$\mathbf{a} \cdot \mathbf{b} = \mathbf{a} \cdot \sum_i b_i \mathbf{e}_i = \sum_i b_i (\mathbf{a} \cdot \mathbf{e}_i) = \sum_i b_i a_i = \sum_i a_i b_i,$$

which is precisely the algebraic definition of the dot product. So the geometric dot product equals the algebraic dot product.
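As a numerical sanity check of this equivalence (not a proof), two planar vectors can be built from a length and a direction angle, so that $\|\mathbf{a}\|\,\|\mathbf{b}\|\cos\theta$ is evaluated without summing coordinates; the algebraic sum over Cartesian components then agrees up to floating-point rounding. The sketch assumes the plane with the standard basis; the helper names and sample values are illustrative.

```python
import math


def algebraic_dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def geometric_dot(r1, alpha, r2, beta):
    """|a| |b| cos(theta) for planar vectors given by length and direction angle."""
    return r1 * r2 * math.cos(alpha - beta)


r1, alpha = 2.0, math.radians(30)
r2, beta = 3.0, math.radians(100)
# Cartesian coordinates with respect to the orthonormal basis e1 = (1, 0), e2 = (0, 1)
a = [r1 * math.cos(alpha), r1 * math.sin(alpha)]
b = [r2 * math.cos(beta), r2 * math.sin(beta)]

print(algebraic_dot(a, b), geometric_dot(r1, alpha, r2, beta))  # agree up to rounding
```

The agreement rests on the identity $\cos\alpha\cos\beta + \sin\alpha\sin\beta = \cos(\alpha - \beta)$.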

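The dot product fulfills the following properties if $\mathbf{a}$, $\mathbf{b}$, and $\mathbf{c}$ are real vectors and $r$, $c_1$, and $c_2$ are scalars.

Commutative

$$\mathbf{a} \cdot \mathbf{b} = \mathbf{b} \cdot \mathbf{a},$$

which follows from the geometric definition, since $\left\| \mathbf{a} \right\| \left\| \mathbf{b} \right\| \cos \theta = \left\| \mathbf{b} \right\| \left\| \mathbf{a} \right\| \cos \theta$.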

Distributive over vector addition

$$\mathbf{a} \cdot (\mathbf{b} + \mathbf{c}) = \mathbf{a} \cdot \mathbf{b} + \mathbf{a} \cdot \mathbf{c}.$$

Bilinear

$$\mathbf{a} \cdot (r\mathbf{b} + \mathbf{c}) = r(\mathbf{a} \cdot \mathbf{b}) + (\mathbf{a} \cdot \mathbf{c}).$$

Scalar multiplication

$$(c_1 \mathbf{a}) \cdot (c_2 \mathbf{b}) = c_1 c_2 (\mathbf{a} \cdot \mathbf{b}).$$

Not associative

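The dot product is not associative, because the dot product between a scalar $\mathbf{a} \cdot \mathbf{b}$ and a vector $\mathbf{c}$ is not defined, so the expressions $(\mathbf{a} \cdot \mathbf{b}) \cdot \mathbf{c}$ and $\mathbf{a} \cdot (\mathbf{b} \cdot \mathbf{c})$ that the associative property would involve are both ill-defined.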

Orthogonal

Two non-zero vectors $\mathbf{a}$ and $\mathbf{b}$ are orthogonal if and only if $\mathbf{a} \cdot \mathbf{b} = 0$.

No cancellation

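Unlike multiplication of ordinary numbers, where if $ab = ac$ then $b$ always equals $c$ unless $a$ is zero, the dot product does not obey the cancellation law. If $\mathbf{a} \cdot \mathbf{b} = \mathbf{a} \cdot \mathbf{c}$ and $\mathbf{a} \neq \mathbf{0}$, the distributive law gives $\mathbf{a} \cdot (\mathbf{b} - \mathbf{c}) = 0$; this only means that $\mathbf{a}$ is perpendicular to $\mathbf{b} - \mathbf{c}$, which still allows $\mathbf{b} - \mathbf{c} \neq \mathbf{0}$ and therefore $\mathbf{b} \neq \mathbf{c}$.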

If $\mathbf{a}$ …
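The algebraic properties listed above can be spot-checked with small integer vectors, for which Python's integer arithmetic is exact, so plain equality assertions are safe. This is an illustrative check on particular values, not a proof; the helpers `add` and `scale` are hypothetical names, and the last case is a concrete instance of the failure of cancellation.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def add(u, v):
    return [x + y for x, y in zip(u, v)]


def scale(r, u):
    return [r * x for x in u]


a, b, c, r = [1, 2, 3], [4, -5, 6], [-7, 8, 9], 2

# Commutative
assert dot(a, b) == dot(b, a)
# Bilinear: a . (r b + c) = r (a . b) + (a . c)
assert dot(a, add(scale(r, b), c)) == r * dot(a, b) + dot(a, c)
# Scalar multiplication: (c1 a) . (c2 b) = c1 c2 (a . b), with c1 = 3, c2 = -2
assert dot(scale(3, a), scale(-2, b)) == 3 * -2 * dot(a, b)

# No cancellation: p . q1 == p . q2 with p != 0, yet q1 != q2,
# because p is merely perpendicular to q1 - q2 = [2, -2]
p, q1, q2 = [1, 1], [2, 0], [0, 2]
assert dot(p, q1) == dot(p, q2) == 2 and q1 != q2
```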

Application to the law of cosines


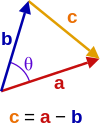

Triangle with vector edges a and b, separated by angle θ.

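Given two vectors $\mathbf{a}$ and $\mathbf{b}$ separated by angle $\theta$, they form a triangle with a third side $\mathbf{c} = \mathbf{a} - \mathbf{b}$. Let $a$, $b$, and $c$ denote the lengths of $\mathbf{a}$, $\mathbf{b}$, and $\mathbf{c}$, respectively. The dot product of $\mathbf{c}$ with itself is: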

$$\begin{aligned} \mathbf{c} \cdot \mathbf{c} &= (\mathbf{a} - \mathbf{b}) \cdot (\mathbf{a} - \mathbf{b}) \\ &= \mathbf{a} \cdot \mathbf{a} - \mathbf{a} \cdot \mathbf{b} - \mathbf{b} \cdot \mathbf{a} + \mathbf{b} \cdot \mathbf{b} \\ &= a^2 - \mathbf{a} \cdot \mathbf{b} - \mathbf{a} \cdot \mathbf{b} + b^2 \\ &= a^2 - 2\,\mathbf{a} \cdot \mathbf{b} + b^2 \\ c^2 &= a^2 + b^2 - 2ab \cos \theta \end{aligned}$$

which is the law of cosines.
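The derivation can be checked numerically on one triangle. In this minimal sketch the vectors are arbitrary sample values; the angle $\theta$ is obtained from the dot product, and both sides of the law of cosines are compared.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


a = [4.0, 1.0, -2.0]
b = [1.0, 3.0, 2.0]
c = [x - y for x, y in zip(a, b)]  # third side c = a - b

len_a, len_b, len_c = (math.sqrt(dot(v, v)) for v in (a, b, c))
theta = math.acos(dot(a, b) / (len_a * len_b))  # angle between a and b

lhs = len_c ** 2
rhs = len_a ** 2 + len_b ** 2 - 2 * len_a * len_b * math.cos(theta)
print(lhs, rhs)  # both approximately 29 (= 21 + 14 - 2 * 3), up to rounding
```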

There are two ternary operations involving the dot product and the cross product.

The scalar triple product of three vectors is defined as

$$\mathbf{a} \cdot (\mathbf{b} \times \mathbf{c}) = \mathbf{b} \cdot (\mathbf{c} \times \mathbf{a}) = \mathbf{c} \cdot (\mathbf{a} \times \mathbf{b}).$$

The vector triple product is defined by[2][3]

$$\mathbf{a} \times (\mathbf{b} \times \mathbf{c}) = (\mathbf{a} \cdot \mathbf{c})\,\mathbf{b} - (\mathbf{a} \cdot \mathbf{b})\,\mathbf{c}.$$
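Both identities can be verified on sample integer vectors. Python's standard library has no cross product, so this sketch writes one out for 3-vectors; the helper names and the vectors are illustrative.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    """Cross product of two 3-vectors."""
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]


def scale(r, u):
    return [r * x for x in u]


def sub(u, v):
    return [x - y for x, y in zip(u, v)]


a, b, c = [1, 2, 3], [4, 5, 6], [7, 8, 10]

# Scalar triple product: invariant under cyclic permutation of a, b, c
s1, s2, s3 = dot(a, cross(b, c)), dot(b, cross(c, a)), dot(c, cross(a, b))
assert s1 == s2 == s3

# Vector triple product: a x (b x c) = (a . c) b - (a . b) c
assert cross(a, cross(b, c)) == sub(scale(dot(a, c), b), scale(dot(a, b), c))
print(s1, cross(a, cross(b, c)))  # -3 [-12, 9, -2]
```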

In physics, the dot product takes two vectors and returns a scalar quantity. It is also known as the "scalar product". The dot product of two vectors can be defined as the product of the magnitudes of the two vectors and the cosine of the angle between the two vectors. Thus,

$$\mathbf{a} \cdot \mathbf{b} = |\mathbf{a}|\,|\mathbf{b}| \cos \theta.$$

- Mechanical work is the dot product of force and displacement vectors.

- Power is the dot product of force and velocity.
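A minimal sketch of the work and power examples, assuming a constant force and straight-line motion (for a force that varies along a curved path, work is instead a line integral). The numerical values and units are hypothetical.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


# Hypothetical values: a constant force (newtons) and a straight displacement (metres)
force = [3.0, 4.0, 0.0]
displacement = [2.0, 0.0, 0.0]
velocity = [0.5, 0.5, 0.0]  # metres per second

work = dot(force, displacement)  # joules: only the force component along the motion counts
power = dot(force, velocity)     # watts
print(work, power)  # 6.0 3.5
```

The $y$-component of the force does no work here because it is perpendicular to the displacement.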

For vectors with complex entries, using the given definition of the dot product would lead to quite different properties. For instance, the dot product of a vector with itself could be zero without the vector being the zero vector (e.g. this would happen with the vector $\mathbf{a} = [1\ i]$, since $1 \cdot 1 + i \cdot i = 1 - 1 = 0$). Properties such as positive definiteness can be recovered through the alternative definition

$$\mathbf{a} \cdot \mathbf{b} = \sum_i a_i \overline{b_i},$$

where $\overline{b_i}$ is the complex conjugate of $b_i$. When vectors are represented by column vectors, this can be expressed as a matrix product involving the conjugate transpose, denoted with the superscript H:

$$\mathbf{a} \cdot \mathbf{b} = \mathbf{b}^{\mathsf{H}} \mathbf{a}.$$

In the case of vectors with real components, this definition is the same as in the real case. The dot product of any vector with itself is a non-negative real number, and it is nonzero except for the zero vector. However, the complex dot product is sesquilinear rather than bilinear: with the definition $\mathbf{a} \cdot \mathbf{b} = \mathbf{b}^{\mathsf{H}} \mathbf{a}$ it is linear in $\mathbf{a}$ but conjugate linear, not linear, in $\mathbf{b}$.

The complex dot product leads to the notions of Hermitian forms and general inner product spaces, which are widely used in mathematics and physics.

The self dot product of a complex vector, $\mathbf{a} \cdot \mathbf{a} = \mathbf{a}^{\mathsf{H}} \mathbf{a}$, is the square of the Euclidean norm: $\mathbf{a} \cdot \mathbf{a} = \left\| \mathbf{a} \right\|^2$.
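The difference between the naive formula and the conjugated definition shows up immediately for $\mathbf{a} = [1\ i]$. This minimal sketch uses Python's built-in complex numbers (`1j` is the imaginary unit); the function names are illustrative, and the pair `u`, `v` is an arbitrary sample used to show conjugate symmetry.

```python
def naive_dot(a, b):
    """Real-case formula applied unchanged to complex entries."""
    return sum(x * y for x, y in zip(a, b))


def complex_dot(a, b):
    """a . b = sum of a_i * conj(b_i), i.e. b^H a."""
    return sum(x * y.conjugate() for x, y in zip(a, b))


a = [1, 1j]
print(naive_dot(a, a))    # 0j: zero although a is not the zero vector
print(complex_dot(a, a))  # (2+0j): the squared Euclidean norm of a

# Conjugate symmetry in place of symmetry: a . b is the conjugate of b . a
u, v = [1 + 2j, 3j], [2 - 1j, 1 + 1j]
assert complex_dot(u, v) == complex_dot(v, u).conjugate()
```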

The inner product generalizes the dot product to abstract vector spaces over a field of scalars, being either the field of real numbers $\mathbb{R}$ or the field of complex numbers $\mathbb{C}$.

The inner product of two vectors over the field of complex numbers is, in general, a complex number, and is sesquilinear instead of bilinear. An inner product space is a normed vector space, and the inner product of a vector with itself is real and positive-definite.

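The dot product is defined for vectors that have a finite number of entries. Thus these vectors can be regarded as discrete functions: a length-$n$ vector $u$ is a function with domain $\{k \in \mathbb{N} : 1 \leq k \leq n\}$, and $u_i$ denotes the value of $u$ at $i$.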

This notion can be generalized to continuous functions: just as the inner product on vectors uses a sum over corresponding components, the inner product on functions is defined as an integral over some interval $[a, b]$:[2]

$$\left\langle u, v \right\rangle = \int_a^b u(x) v(x) \, dx.$$

Generalized further to complex functions $\psi(x)$ and $\chi(x)$, by analogy with the complex inner product above, this gives

$$\left\langle \psi, \chi \right\rangle = \int_a^b \psi(x) \overline{\chi(x)} \, dx.$$

Inner products can have a weight function (i.e., a function which weights each term of the inner product with a value). Explicitly, the inner product of functions $u(x)$ and $v(x)$ with respect to a weight function $r(x) > 0$ is

$$\left\langle u, v \right\rangle_r = \int_a^b r(x) u(x) v(x) \, dx.$$
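These integrals can be approximated numerically by a finite sum over sample points, which makes the analogy with the component-wise sum explicit. The sketch below uses the midpoint rule, an illustrative choice of quadrature that is not part of the definition, and handles real-valued functions only. The helper `inner` is hypothetical; its optional `weight` argument plays the role of $r(x)$. On $[0, \pi]$, $\sin$ and $\cos$ are orthogonal; on $[0, 1]$ the functions $1$ and $1 - \tfrac{3}{2}x$ have unweighted inner product $\tfrac14$ but become orthogonal with the weight $r(x) = x$.

```python
import math
from typing import Callable

RealFn = Callable[[float], float]


def inner(u: RealFn, v: RealFn, a: float, b: float,
          weight: RealFn = lambda x: 1.0, n: int = 10_000) -> float:
    """Midpoint-rule approximation of the integral of weight(x) * u(x) * v(x) over [a, b].

    Real-valued functions only; for complex functions v would need to be conjugated.
    """
    h = (b - a) / n
    # Each sample point contributes one product, like one component of a dot product
    return h * sum(weight(x) * u(x) * v(x)
                   for x in (a + (k + 0.5) * h for k in range(n)))


def one(x: float) -> float:
    return 1.0


def p1(x: float) -> float:
    return 1.0 - 1.5 * x


print(inner(math.sin, math.cos, 0.0, math.pi))       # approximately 0
print(inner(math.sin, math.sin, 0.0, math.pi))       # approximately pi / 2
print(inner(one, p1, 0.0, 1.0))                      # approximately 0.25 (no weight)
print(inner(one, p1, 0.0, 1.0, weight=lambda x: x))  # approximately 0 (weight r(x) = x)
```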

Dyadics and matrices


A double-dot product for matrices is the Frobenius inner product, which is analogous to the dot product on vectors. It is defined as the sum of the products of the corresponding components of two matrices $\mathbf{A}$ and $\mathbf{B}$ of the same size, with the entries of $\mathbf{B}$ conjugated when they are complex:

$$\mathbf{A} : \mathbf{B} = \sum_i \sum_j A_{ij} \overline{B_{ij}} = \operatorname{tr}(\mathbf{B}^{\mathsf{H}} \mathbf{A}) = \operatorname{tr}(\mathbf{A} \mathbf{B}^{\mathsf{H}}).$$
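The entry-wise sum and the two trace forms can be compared on a small complex example. This is a minimal sketch with nested lists standing in for matrices; all helper names and the sample matrices are illustrative.

```python
def frobenius(A, B):
    """A : B = sum over i, j of A_ij * conj(B_ij) for same-shape matrices."""
    if len(A) != len(B) or any(len(ra) != len(rb) for ra, rb in zip(A, B)):
        raise ValueError("matrices must have the same shape")
    return sum(x * complex(y).conjugate()
               for ra, rb in zip(A, B) for x, y in zip(ra, rb))


def conj_transpose(M):
    """M^H: entry (j, i) is the conjugate of M[i][j]."""
    return [[complex(M[i][j]).conjugate() for i in range(len(M))]
            for j in range(len(M[0]))]


def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]


def trace(M):
    return sum(M[i][i] for i in range(len(M)))


A = [[1, 2j], [3, 4]]
B = [[1j, 1], [2, 1 - 1j]]
print(frobenius(A, B))                        # (10+5j)
print(trace(matmul(conj_transpose(B), A)))    # tr(B^H A), same value
print(trace(matmul(A, conj_transpose(B))))    # tr(A B^H), same value
```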

Writing a matrix as a dyadic, we can define a different double-dot product (see Dyadics § Product of dyadic and dyadic); however, it is not an inner product.

The inner product between a tensor of order $n$ …

The straightforward algorithm for calculating a floating-point dot product of vectors can suffer from catastrophic cancellation. To avoid this, approaches such as the Kahan summation algorithm are used.
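A minimal sketch of a dot product whose running sum uses Kahan compensated summation, in plain Python. It compensates only the rounding of the additions; the rounding of each individual product $a_i b_i$ is not corrected, which would need additional techniques beyond this sketch. The input vectors are illustrative values chosen so that naive accumulation visibly loses the small terms.

```python
def naive_dot(a, b):
    total = 0.0
    for x, y in zip(a, b):
        total += x * y
    return total


def kahan_dot(a, b):
    """Dot product with Kahan-compensated accumulation of the products."""
    total = 0.0
    compensation = 0.0  # running estimate of the low-order bits lost so far
    for x, y in zip(a, b):
        term = x * y - compensation
        new_total = total + term
        # (new_total - total) is the part of `term` that was actually added;
        # subtracting `term` leaves the rounding error, carried into the next step
        compensation = (new_total - total) - term
        total = new_total
    return total


a = [1.0] + [1e-16] * 10
b = [1.0] * 11
print(naive_dot(a, b))  # 1.0: each tiny product is rounded away when added to 1.0
print(kahan_dot(a, b))  # close to the exact value 1 + 1e-15
```

For plain sums of floats, Python's standard library also provides `math.fsum`, which tracks partial sums to give an accurately rounded result.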

A dot product function is included in:

- BLAS level 1: real SDOT, DDOT; complex CDOTU, ZDOTU = X^T * Y, CDOTC, ZDOTC = X^H * Y

- Fortran as dot_product(A,B) or sum(conjg(A) * B)

- Julia as A' * B or standard library LinearAlgebra as dot(A, B)

- R (programming language) as sum(A * B) for vectors or, more generally for matrices, as A %*% B

- Matlab as A' * B or conj(transpose(A)) * B or sum(conj(A) .* B) or dot(A, B)

- Python (package NumPy) as np.matmul(A, B) or np.dot(A, B) or np.inner(A, B)

- GNU Octave as sum(conj(X) .* Y, dim), and similar code as Matlab

- Intel oneAPI Math Kernel Library: real p?dot dot = sub(x)'*sub(y); complex p?dotc dotc = conjg(sub(x)')*sub(y)

Euclidean norm, the square root of the self dot product

[note 1] The term scalar product means literally "product with a scalar as a result". It is also used sometimes for other symmetric bilinear forms, for example in a pseudo-Euclidean space. Not to be confused with scalar multiplication.

[1] "Dot Product". www.mathsisfun.com. Retrieved 2020-09-06.

[2] S. Lipschutz; M. Lipson (2009). Linear Algebra (Schaum's Outlines) (4th ed.). McGraw Hill. ISBN 978-0-07-154352-1.

[3] M. R. Spiegel; S. Lipschutz; D. Spellman (2009). Vector Analysis (Schaum's Outlines) (2nd ed.). McGraw Hill. ISBN 978-0-07-161545-7.

[4] A. I. Borisenko; I. E. Taparov (1968). Vector and tensor analysis with applications. Translated by Richard Silverman. Dover. p. 14.

[5] G. B. Arfken; H. J. Weber (2000). Mathematical Methods for Physicists (5th ed.). Boston, MA: Academic Press. pp. 14–15. ISBN 978-0-12-059825-0.

[6] D. Nykamp. "The dot product". Math Insight. Retrieved 2020-09-06.

[7] E. W. Weisstein. "Dot Product". MathWorld, A Wolfram Web Resource. http://mathworld.wolfram.com/DotProduct.html

[8] T. Banchoff; J. Wermer (1983). Linear Algebra Through Geometry. Springer Science & Business Media. p. 12. ISBN 978-1-4684-0161-5.

[9] A. Bedford; W. L. Fowler (2008). Engineering Mechanics: Statics (5th ed.). Prentice Hall. p. 60. ISBN 978-0-13-612915-8.

[10] K. F. Riley; M. P. Hobson; S. J. Bence (2010). Mathematical methods for physics and engineering (3rd ed.). Cambridge University Press. ISBN 978-0-521-86153-3.

[11] M. Mansfield; C. O'Sullivan (2011). Understanding Physics (4th ed.). John Wiley & Sons. ISBN 978-0-470-74637-0.

[12] S. K. Berberian (2014) [1992]. Linear Algebra. Dover. p. 287. ISBN 978-0-486-78055-9.
