AutoClass@IJM: a powerful tool for Bayesian classification of heterogeneous data in biology

Abstract

Recently, several theoretical and applied studies have shown that unsupervised Bayesian classification systems are of particular relevance for biological studies. However, these systems have not yet been widely adopted by the biological community, mainly because few dedicated programs are freely available and because Bayesian clustering algorithms are known to be time consuming, which limits their usefulness on personal computers. To overcome these limitations, we developed AutoClass@IJM, a computational resource with a web interface to AutoClass, a powerful unsupervised Bayesian classification system developed at the NASA Ames Research Center. AutoClass has several powerful features with broad applications in the biological sciences: (i) it determines the number of classes automatically, (ii) it allows the user to mix discrete and real-valued data and (iii) it handles missing values. End users upload their data sets through our web interface, and the computations are then queued on our cluster server. When the clustering is complete, a URL to the results is sent to the user by e-mail. AutoClass@IJM is freely available at: http://ytat2.ijm.univ-paris-diderot.fr/AutoclassAtIJM.html.

INTRODUCTION

High-throughput experiments in the life sciences, such as gene expression microarrays, produce very large data sets. One of the first steps in analyzing such data is usually to subdivide them into smaller groups of items that share a number of common traits. Clustering is therefore often a critical step in the analysis of these data sets.

Several clustering algorithms have been proposed, including hierarchical clustering, _k_-means and self-organizing maps (SOM), as well as several enhancements of these algorithms (1–5). Several theoretical and applied studies have also shown that unsupervised Bayesian classification systems are of particular relevance for biological studies (6–15), and these algorithms are consequently being used more and more in biology.

AutoClass is a general-purpose clustering algorithm that has been developed by the Bayesian Learning Group at the NASA Ames Research Center since the 1980s (16,17). It is an unsupervised Bayesian classification system based on a finite mixture model, combined with a Bayesian method and an Expectation–Maximization (EM) algorithm for determining the optimal classes. AutoClass uses maximum likelihood to find the class description that best predicts the data (a summary of the AutoClass algorithm is presented in Supplementary Figure 1). Similar approaches have been developed using infinite mixture models and Gibbs sampling for parameter estimation (6). Our 3-year experience, based on a collaboration between a bioinformatics group and a wet lab and supported by validation of the algorithm on both simulated (see Supplementary Data) and experimental data (see below), persuaded us that AutoClass has several characteristics well suited to biological data sets: (i) the user does not need to specify the number of classes, which makes the algorithm very attractive for avoiding the difficult problems of cutting hierarchical trees and selecting the correct number of clusters, (ii) AutoClass allows the user to mix heterogeneous data (discrete and real-valued) and (iii) AutoClass is able to handle missing values. Moreover, it was designed to handle large data sets. AutoClass has been used for many years in different fields (astrophysics, economics, etc.), but by very few groups in biology (18–24).
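To make the finite mixture model more concrete, the sketch below computes, for a single item, the probability of belonging to each class when every class combines a normal model for one real-valued attribute with a categorical model for one discrete attribute. It is a minimal illustration, not AutoClass code: the class parameters are made-up values (in AutoClass they would be estimated by EM), attributes are assumed independent within a class, and leaving a missing attribute out of the product is a simplification of this sketch rather than AutoClass's own treatment of missing values.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ClassModel:
    """Hypothetical parameters of one class (mixture component)."""
    weight: float                      # mixing proportion of the class
    mean: float                        # normal model for the real-valued attribute
    sd: float
    category_probs: Dict[str, float]   # categorical model for the discrete attribute


def gaussian_log_pdf(x: float, mean: float, sd: float) -> float:
    """Log of the normal (Gaussian) density at x."""
    return -0.5 * math.log(2 * math.pi * sd * sd) - (x - mean) ** 2 / (2 * sd * sd)


def membership_probs(real_value: Optional[float], discrete_value: Optional[str],
                     classes: List[ClassModel]) -> List[float]:
    """Probability that one item belongs to each class.

    Attributes are treated as independent within a class; a missing
    attribute (None) is simply left out of the product (sketch assumption).
    """
    log_scores = []
    for c in classes:
        score = math.log(c.weight)
        if real_value is not None:
            score += gaussian_log_pdf(real_value, c.mean, c.sd)
        if discrete_value is not None:
            score += math.log(c.category_probs[discrete_value])
        log_scores.append(score)
    top = max(log_scores)  # log-sum-exp normalization for numerical stability
    unnormalized = [math.exp(s - top) for s in log_scores]
    total = sum(unnormalized)
    return [u / total for u in unnormalized]


classes = [
    ClassModel(0.5, 0.0, 1.0, {"wt": 0.8, "mut": 0.2}),
    ClassModel(0.5, 3.0, 1.0, {"wt": 0.2, "mut": 0.8}),
]
print(membership_probs(0.2, "wt", classes))    # strongly favors the first class
print(membership_probs(None, "mut", classes))  # real value missing: roughly [0.2, 0.8]
```

These per-item probabilities are the same kind of quantity that AutoClass@IJM reports in its CDT output, where each item has a probability of belonging to each class.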

However, as previously reported (7,10), the computing time of Bayesian clustering algorithms increases sharply as data sets become very large. End users running these algorithms on their personal computers are therefore limited by computation time, and the very large data sets produced by high-throughput data acquisition make this ‘need for computation time’ even more acute.

To our knowledge, very few web servers implement Bayesian clustering algorithms (11,25), and none of them offers as many of these features as AutoClass.

To overcome these limitations, we developed AutoClass@IJM, a web interface dedicated to the AutoClass algorithm, and we make the underlying computational resources available free of charge to the academic community.

IMPLEMENTATION

We deployed AutoClass C, version 3.3.4, on a Unix system. The cluster server is composed of 18 blades (64-bit dual-processor, single- or dual-core, 2 GB RAM) for a total of 64 CPU cores, which offers end users substantial computing power. OpenPBS (Torque/Maui) is used to schedule the queued jobs.
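As an illustration of what a queued job might look like on such a cluster, the sketch below builds a Torque/PBS batch script for one AutoClass search. It is a hypothetical example, not the AutoClass@IJM configuration: the function name, job name, file prefix, walltime and resource request are placeholders, and the `autoclass -search` call with its four input files (.db2 data, .hd2 header, .model and .s-params) follows our reading of the AutoClass C documentation and should be checked against it.

```python
def make_pbs_script(job_name: str, email: str, base: str,
                    walltime: str = "48:00:00") -> str:
    """Return a hypothetical Torque/PBS batch script for one AutoClass search.

    `base` is the shared prefix of the AutoClass input files
    (base.db2, base.hd2, base.model, base.s-params); all values are placeholders.
    """
    lines = [
        "#!/bin/sh",
        f"#PBS -N {job_name}",           # job name shown by qstat
        "#PBS -l nodes=1:ppn=1",         # one core: the AutoClass C search runs serially
        f"#PBS -l walltime={walltime}",  # upper bound on the run time
        f"#PBS -M {email}",              # address for scheduler notifications
        "#PBS -m abe",                   # mail on abort, begin and end of the job
        'cd "$PBS_O_WORKDIR"',           # run in the directory the job was submitted from
        f"autoclass -search {base}.db2 {base}.hd2 {base}.model {base}.s-params",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(make_pbs_script("ac_example", "user@example.org", "expr"))
```

Such a file would be handed to the scheduler with `qsub`, which places it in the queue; requesting a single core per job matches the fact that only a future parallel version of AutoClass would use several.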

WEB APPLICATION DESCRIPTION

Using AutoClass@IJM requires two steps: (i) preparing the data and (ii) submitting the data files (optionally modifying the default clustering parameters). A URL to the results is then sent to the user by e-mail. The turnaround time ranges from minutes to days, depending on the size of the data set and the load on the cluster.

PREPARING DATA FILES

AutoClass can handle three types of data: (i) singly bounded real numbers (‘Real Scalar’ in AutoClass terminology), such as length or weight; (ii) real numbers distributed on both sides of an origin (‘Real location’), such as Cartesian coordinates (where the origin is 0.0), microarray log ratios or elevation (where sea level is the origin); and (iii) discrete data, i.e. any qualitative data, such as chromosome number, phenotype or eye color. The web interface provides a separate input field for each type of data.
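Because each data type has its own input field, a table that mixes types has to be split before submission. The sketch below does this with Python's standard `csv` module. The function name, the column names and the column-to-type mapping are hypothetical, and the mapping has to come from the user: a numeric column such as chromosome number can still be discrete.

```python
import csv
import io
from typing import Dict, List

DATA_TYPES = ("real scalar", "real location", "discrete")


def split_by_type(rows: List[Dict[str, str]], id_col: str,
                  col_types: Dict[str, str]) -> Dict[str, str]:
    """Split a mixed table into one tab-delimited text per data type.

    col_types maps each data column to one of DATA_TYPES, as decided by
    the user. Returns {data type: file content}; unused types are omitted.
    """
    out: Dict[str, str] = {}
    for kind in DATA_TYPES:
        cols = [c for c, t in col_types.items() if t == kind]
        if not cols:
            continue
        buf = io.StringIO()
        writer = csv.writer(buf, delimiter="\t", lineterminator="\n")
        writer.writerow([id_col] + cols)  # optional header line
        for row in rows:
            # Missing values stay as blank fields.
            writer.writerow([row[id_col]] + [row.get(c, "") for c in cols])
        out[kind] = buf.getvalue()
    return out


rows = [
    {"gene": "G1", "length": "12.5", "log_ratio": "-0.7", "chromosome": "4"},
    {"gene": "G2", "length": "", "log_ratio": "1.1", "chromosome": "9"},
]
files = split_by_type(rows, "gene", {"length": "real scalar",
                                     "log_ratio": "real location",
                                     "chromosome": "discrete"})
for kind, content in files.items():
    print(f"--- {kind} ---\n{content}")
```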

- The user must prepare a separate text file for each type of data to be classified.

- Each file must be tab-delimited and contain at least two columns: the first holds the IDs (shorter than 30 characters) of the elements to cluster (such as gene names) and the others hold the data. Columns that contain the same value on every line are not allowed and must be removed. Each file may contain a header line. AutoClass can handle an ‘unlimited’ number of lines and a maximum of 999 columns (the AutoClass default; this maximum may be increased on request).

- Within each file, elements with the same ID are detected and automatically merged into a single entry, using (i) the mean value for numerical data and (ii) the last value for discrete data.

- The same ID in different files represents the same element.

- Missing values are allowed (blank fields in the data file).
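The file rules above can be checked locally before uploading. The following sketch parses one tab-delimited file, enforces the ID length and column limits, rejects constant columns, treats blank fields as missing and merges duplicated IDs (mean for numerical data, last value for discrete data). It is our own reading of the rules, not the server's code: the function name is hypothetical, and counting only data columns toward the 999 limit, averaging over non-missing values only, taking the last non-blank discrete value and also rejecting all-blank columns are assumptions.

```python
import csv
import io
from typing import Dict, List, Optional, Tuple

MAX_ID_LEN = 29    # IDs must be shorter than 30 characters
MAX_COLUMNS = 999  # AutoClass default limit (counted here as data columns)


def load_type_file(text: str, numeric: bool, has_header: bool = True
                   ) -> Tuple[Optional[List[str]], Dict[str, list]]:
    """Parse one tab-delimited input file and merge duplicated IDs.

    Returns (header, {ID: merged values}); blank fields become None (missing).
    """
    rows = [r for r in csv.reader(io.StringIO(text), delimiter="\t") if r]
    header = rows.pop(0) if has_header and rows else None
    ncol = max((len(r) - 1 for r in rows), default=0)
    if ncol > MAX_COLUMNS:
        raise ValueError(f"{ncol} data columns exceed the limit of {MAX_COLUMNS}")

    # Columns with one value on every line (or no value at all) are rejected.
    for j in range(1, ncol + 1):
        values = {r[j] for r in rows if j < len(r) and r[j] != ""}
        if len(values) <= 1:
            raise ValueError(f"data column {j} is constant and must be removed")

    # Group lines by ID; dicts keep first-seen order.
    groups: Dict[str, List[List[str]]] = {}
    for r in rows:
        if len(r[0]) > MAX_ID_LEN:
            raise ValueError(f"ID longer than {MAX_ID_LEN} characters: {r[0]!r}")
        groups.setdefault(r[0], []).append(r[1:])

    merged: Dict[str, list] = {}
    for item_id, lines in groups.items():
        values_out: list = []
        for j in range(ncol):
            present = [v[j] for v in lines if j < len(v) and v[j] != ""]
            if not present:
                values_out.append(None)                                 # missing
            elif numeric:
                values_out.append(sum(map(float, present)) / len(present))  # mean
            else:
                values_out.append(present[-1])                          # last value
        merged[item_id] = values_out
    return header, merged
```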

We provide two examples (data sets and result files) on the web interface as illustrative material: one data set with real-valued data and one with heterogeneous (discrete and real-valued) data.

SUBMITTING DATA FILES

The user must provide an e-mail address and upload the data files into the appropriate fields. AutoClass uses several parameters, for which we provide an optimized default set: these are the AutoClass defaults except for the ‘max_n_cycles’ parameter (the maximum number of cycles). AutoClass chooses the best among 100 classifications. Each classification is an iterative process that stops when the convergence criteria are met or when the maximum number of cycles is reached (see Supplementary Figure 1). Gene expression data are especially difficult to cluster because they are very noisy, and in our experience the AutoClass default maximum of 200 cycles is reached too often. We therefore set this parameter to 1000, so that most classifications converge before the maximum number of cycles is reached.
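The search strategy described above (several independent tries, each an iterative fit that stops on convergence or at a maximum number of cycles, with the best result kept) can be sketched with a one-dimensional Gaussian mixture fitted by EM. This is a simplified stand-in written for illustration, not AutoClass's search: AutoClass ranks classifications with its own Bayesian criterion and also varies the number of classes, whereas here the number of classes `k` is fixed and tries are ranked by log-likelihood. The function names, `n_tries` and the convergence threshold `rel_delta` are hypothetical; only `max_n_cycles` mirrors the parameter named in the text.

```python
import math
import random
from typing import List, Tuple

Result = Tuple[float, List[float], int, bool]  # (log-likelihood, means, cycles, converged)


def em_try(data: List[float], k: int, rng: random.Random,
           max_n_cycles: int, rel_delta: float = 1e-6) -> Result:
    """One EM try for a k-class 1-D Gaussian mixture from a random start."""
    if max_n_cycles < 1:
        raise ValueError("max_n_cycles must be at least 1")
    n = len(data)
    mu = sum(data) / n
    overall_var = sum((x - mu) ** 2 for x in data) / n
    means = rng.sample(data, k)  # random starting class centers
    variances = [overall_var] * k
    weights = [1.0 / k] * k
    prev = -math.inf
    for cycle in range(1, max_n_cycles + 1):
        # E-step: class membership probabilities and log-likelihood.
        resp, loglik = [], 0.0
        for x in data:
            logs = [math.log(w) - 0.5 * math.log(2 * math.pi * v) - (x - m) ** 2 / (2 * v)
                    for w, m, v in zip(weights, means, variances)]
            top = max(logs)
            s = sum(math.exp(lp - top) for lp in logs)
            loglik += top + math.log(s)
            resp.append([math.exp(lp - top) / s for lp in logs])
        converged = abs(loglik - prev) <= rel_delta * abs(loglik)
        if converged or cycle == max_n_cycles:  # stop on convergence or at the cap
            return loglik, sorted(means), cycle, converged
        prev = loglik
        # M-step: re-estimate class weights, means and variances.
        for j in range(k):
            nj = max(sum(r[j] for r in resp), 1e-12)
            weights[j] = nj / n
            means[j] = sum(r[j] * x for r, x in zip(resp, data)) / nj
            variances[j] = max(sum(r[j] * (x - means[j]) ** 2
                                   for r, x in zip(resp, data)) / nj, 1e-6)
    raise AssertionError("unreachable")


def search(data: List[float], k: int, n_tries: int = 10,
           max_n_cycles: int = 1000, seed: int = 0) -> Tuple[Result, int]:
    """Run n_tries EM tries; return the best one and how many hit the cycle cap."""
    rng = random.Random(seed)
    tries = [em_try(data, k, rng, max_n_cycles) for _ in range(n_tries)]
    n_capped = sum(1 for t in tries if not t[3])
    return max(tries, key=lambda t: t[0]), n_capped


gen = random.Random(1)
data = [gen.gauss(0.0, 1.0) for _ in range(100)] + [gen.gauss(6.0, 1.0) for _ in range(100)]
best, n_capped = search(data, k=2, n_tries=5)
print(best[1], best[3], n_capped)
```

Counting how many tries stop at the cap (`n_capped`) is one simple way to see the problem described above: if most tries end without converging, the cycle limit is too low for the data.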

The user may, however, change the ‘error’ parameter. This error is relative (i.e. the ratio of the error to the value) for real scalars and constant for real location values.

Each analysis is submitted as a single job. Submitted jobs are queued, and a first e-mail is sent to the user when the job starts running.

ERROR PARAMETER

The error parameter applies to the input data. As stated in the AutoClass documentation files:

The fundamental question in all of this is: “**to what extent do you believe the numbers that are to be given to AutoClass?**” AutoClass will run quite happily with whatever it is given. It is up to the user to decide what is meaningful and what is not.

In practice: (i) for the ‘real scalar’ data type, the error parameter is expressed as a fraction of the value and must therefore be less than 1 (i.e. <100%); (ii) for the ‘real location’ data type, the error parameter is a constant value.
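The two conventions can be illustrated with a small helper that converts the user-supplied error into an absolute uncertainty for a given value. The function is hypothetical and only restates the rule above; the validation choices (a relative error strictly between 0 and 1, a positive constant error) are this sketch's own.

```python
def absolute_error(value: float, error: float, kind: str) -> float:
    """Absolute uncertainty implied by the 'error' parameter for one value.

    kind is "real scalar" (error is a fraction of the value) or
    "real location" (error is a constant in the units of the data).
    """
    if kind == "real scalar":
        if not 0 < error < 1:
            raise ValueError("relative error for real scalars must be between 0 and 1")
        return error * abs(value)
    if kind == "real location":
        if error <= 0:
            raise ValueError("error for real locations must be positive")
        return error
    raise ValueError(f"unknown data type: {kind!r}")


# A 5% relative error on a length of 200 means +/-10 length units,
# whereas a constant error of 0.1 on log ratios is +/-0.1 whatever the value.
print(absolute_error(200.0, 0.05, "real scalar"))
print(absolute_error(-2.3, 0.1, "real location"))
```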

If the error parameter entered by the user is too large with respect to the data, the resulting AutoClass error message is interpreted and an e-mail is sent to the user with the AutoClass log file attached.

OUTPUT DATA

AutoClass computes classes using all the input data. After the job completes, a single zip archive is generated containing: (i) a tab-delimited file that associates each ID with the index of its class; (ii) two CDT files for viewing the results in Java TreeView-like software (26) when the input data are exclusively numerical (otherwise, the files can be opened in a spreadsheet): one contains the experimental data and the probability of each item belonging to each class, the other contains only the experimental data (in both files, blank lines are inserted between classes to help identify them visually); and (iii) the AutoClass log file. A second e-mail containing a URL to the zip archive is then sent so that the user can download the results.
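The class-by-class layout of the CDT files can be reproduced from the tab-delimited ID/class file, for instance to re-sort the results in another tool. The sketch below assumes that this file has exactly two columns (ID, class index) and no header, which is our assumption about its layout; it groups IDs by class and inserts an empty line between classes, as the CDT files do.

```python
import csv
import io
from typing import Dict, List


def group_by_class(text: str) -> Dict[int, List[str]]:
    """Map each class index to its IDs, read from an 'ID<TAB>class' file."""
    classes: Dict[int, List[str]] = {}
    for row in csv.reader(io.StringIO(text), delimiter="\t"):
        if row:
            classes.setdefault(int(row[1]), []).append(row[0])
    return classes


def with_class_separators(classes: Dict[int, List[str]]) -> str:
    """One ID per line, classes in index order, a blank line between classes."""
    blocks = ["\n".join(ids) for _, ids in sorted(classes.items())]
    return "\n\n".join(blocks) + "\n"


print(with_class_separators(group_by_class("g1\t1\ng2\t0\ng3\t1\n")))
```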

AutoClass@IJM was initially developed using our own yeast microarray data (and yeast data from public databases). Therefore, when the IDs are standard yeast ORF names following the Saccharomyces Genome Database (SGD) nomenclature (27), the CDT output files are annotated with the SGD ORF descriptions. Otherwise, the CDT output files contain only the original IDs.
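Whether an ID can be annotated depends on whether it follows the SGD systematic naming scheme. The check below is a hypothetical sketch that covers the common nuclear ORF format (Y, a chromosome letter from A to P, L or R for the chromosome arm, three digits, W or C for the strand, and an optional letter suffix such as -A). It does not cover every SGD feature type (mitochondrial ORFs, for example), and the description dictionary is a placeholder, not SGD data.

```python
import re
from typing import Dict

# Common systematic names of nuclear yeast ORFs, e.g. YAL001C or YDR034W-B.
SGD_ORF = re.compile(r"Y[A-P][LR][0-9]{3}[WC](?:-[A-Z])?")


def is_sgd_orf(identifier: str) -> bool:
    """True if the whole identifier matches the systematic ORF pattern."""
    return SGD_ORF.fullmatch(identifier) is not None


def annotate(identifier: str, descriptions: Dict[str, str]) -> str:
    """Add a description column for known SGD-style IDs; keep other IDs as they are."""
    if is_sgd_orf(identifier) and identifier in descriptions:
        return f"{identifier}\t{descriptions[identifier]}"
    return identifier


descriptions = {"YAL001C": "placeholder description"}  # not real SGD data
print(annotate("YAL001C", descriptions))
print(annotate("GAL4", descriptions))
```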

SOME BENEFITS OF USING BAYESIAN CLASSIFICATION: AN EXAMPLE ON MICROARRAY DATA

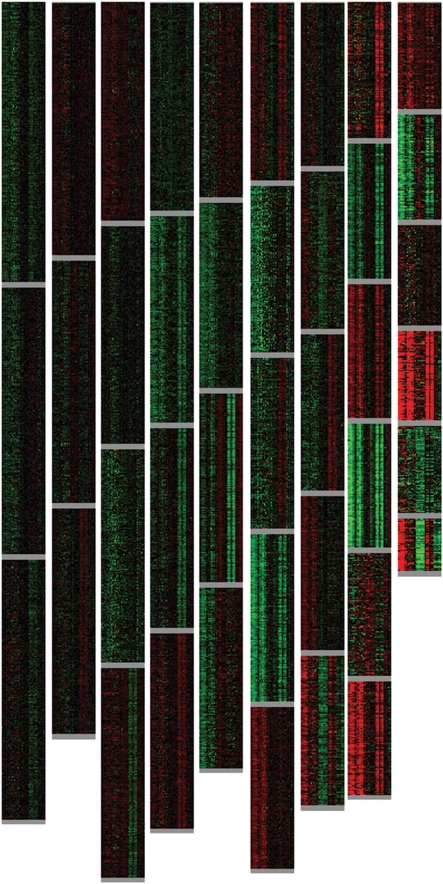

Figure 1 shows a typical example of classification. Clustering the complete data set of Yoshimoto et al. (28) with AutoClass produced homogeneous classes. The original paper focused on characterizing yeast genes that respond to calcium through a calcineurin-dependent pathway, and thereby on better characterizing the Crz1p-binding sites. Using AutoClass, we identified classes of Crz1-responsive genes from which MEME (29) extracted the Crz1p DNA-binding consensus sequence [AC][AC]GCC[AT]C (_E_-values from 10⁻⁸ to 10⁻¹³). We also characterized other classes of genes of interest for the calcium and sodium responses in yeast, such as a class of genes strongly repressed by both calcium and sodium. Analysis of the promoter regions of the genes in this class revealed the PAC and RRPE binding sites described by Beer and Tavazoie (30) (_E_-value = 4.3 × 10⁻²²⁵). Interestingly, these genes do not belong to the ‘ribosome biogenesis regulon’ described by these authors. We are currently investigating how this group of genes is regulated. This group was not discussed by Yoshimoto et al. (28) and is a good example of the power of Bayesian clustering as a discovery tool.
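To show how such a consensus can be used downstream, the sketch below searches DNA sequences for the degenerate Crz1p consensus [AC][AC]GCC[AT]C on both strands with a regular expression. It is a plain exact-match scan, not MEME (which discovers motifs and reports E-values); the function names and example sequences are made up for illustration.

```python
import re
from typing import List, Tuple

# Crz1p consensus from the text; the lookahead also reports overlapping matches.
CRZ1_SITE = re.compile(r"(?=([AC][AC]GCC[AT]C))")
SITE_LEN = 7
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    return seq.translate(COMPLEMENT)[::-1]


def find_sites(seq: str) -> List[Tuple[int, str, str]]:
    """List (start, strand, site); start is the 0-based leftmost position on the + strand."""
    seq = seq.upper()
    hits = [(m.start(), "+", m.group(1)) for m in CRZ1_SITE.finditer(seq)]
    n = len(seq)
    for m in CRZ1_SITE.finditer(reverse_complement(seq)):
        # A site at position p of the reverse complement covers seq[n - p - 7 : n - p].
        hits.append((n - m.start() - SITE_LEN, "-", m.group(1)))
    return sorted(hits)


print(find_sites("TTAAGCCTCTT"))  # [(2, '+', 'AAGCCTC')]
print(find_sites("CCGAGGCTTCC"))  # [(2, '-', 'AAGCCTC')]
```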

Figure 1.

Overview of the Java TreeView output from AutoClass clustering of representative yeast microarray data (6300 rows, 35 columns; Gene Expression Omnibus database at NCBI: GSE3456). Blank lines are inserted between classes to help identify them visually.

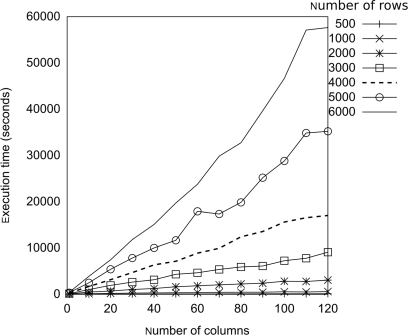

EXECUTION TIME

Because Bayesian classification is computationally demanding, it is useful for end users to know how long a given analysis will take. Such an estimate is difficult because many factors are involved: (i) the computer setup, such as hardware, task scheduling and load balancing of the cluster, and (ii) the underlying structure of the data. To provide an estimate, we used several published data sets to build two large data compendia of 6000 rows and 120 columns. Each compendium was split into subsets of increasing size (from 500 to 6000 rows and from 5 to 120 columns). Each subset was classified three times, and Figure 2 reports the mean computation time (in seconds) required by AutoClass on our system.

Figure 2.

Execution time (in seconds) of AutoClass for various data set sizes. Horizontal axis: number of columns in the data set; vertical axis: mean execution time in seconds (see text). Each curve corresponds to a different number of rows in the data set.

For example, the data set of 500 rows and one column was classified in 16 s, the data set of 6000 rows and 10 columns in 1 h, and the entire data set (6000 rows and 120 columns) in about 16 h. This illustrates the difficulty end users may encounter when clustering on their own personal computers, and is for us a strong motivation to make appropriate computational resources available to the community through an easy-to-use web interface.
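As a rough reading of these timings, the two 6000-row measurements can be compared directly: going from 10 to 120 columns multiplies the number of columns by 12 and the run time by about 16. Assuming, from these two points only, that run time grows as a power of the number of columns $c$ at a fixed number of rows, the implied exponent is close to 1, i.e. roughly linear growth in this range:

```latex
\frac{t(6000,\,120)}{t(6000,\,10)} \approx \frac{16\ \text{h}}{1\ \text{h}} = 16,
\qquad
t \propto c^{\alpha} \;\Rightarrow\;
\alpha \approx \frac{\ln 16}{\ln 12} \approx \frac{2.77}{2.48} \approx 1.12
```

This is only a back-of-the-envelope guide: as noted above, actual run times also depend on the structure of the data and on the load of the cluster.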

DISCUSSION AND CONCLUSION

High-throughput experiments generating large data sets have recently highlighted the need for biologists to have access to computational resources dedicated to clustering their data. These data sets may be heterogeneous (discrete and real values) and often contain missing values. Since Bayesian clustering algorithms are now recognized as a highly valuable alternative to standard clustering algorithms, we anticipate that making such an algorithm available will add considerable value by allowing complex aggregated data sets, a situation often found with biological data, to be classified and analyzed in a single process. We show in this article that the main drawback of Bayesian algorithms, their computational cost, amounts to about 16 h for a ‘medium-size’ data set (6000 × 120 matrix), and that this time may increase further for data such as the human transcriptome, where the expression profiles of more than 35 000 rows are clustered. These figures show that the required computation time can quickly become prohibitive on a personal computer, the standard situation in many biology labs.

We developed, and offer to the academic community, a server dedicated to the well-validated Bayesian clustering algorithm AutoClass. Our web server provides substantial computing power to end users and is an integrated tool for aggregating and classifying heterogeneous data in a single process. The input files are simple tab-delimited text files that users can easily generate from their raw data.

As data set sizes continue to increase, future developments will focus on implementing (i) tools that allow users to monitor their running jobs and (ii) the parallel version of AutoClass (31).

SUPPLEMENTARY DATA

Supplementary Data are available at NAR Online.

FUNDING

French MESR grant-in-aid (to F.A.). Funding for open access charge: CNRS UMR7592 – Université Paris Diderot.

Conflict of interest statement. None declared.


REFERENCES

- 1. Eisen MB, Spellman PT, Brown PO, Botstein D. Cluster analysis and display of genome-wide expression patterns. Proc. Natl Acad. Sci. USA. 1998;95:14863–14868. doi: 10.1073/pnas.95.25.14863.

- 2. Gollub J, Sherlock G. Clustering microarray data. Methods Enzymol. 2006;411:194–213. doi: 10.1016/S0076-6879(06)11010-1.

- 3. De Hoon MJ, Imoto S, Nolan J, Miyano S. Open source clustering software. Bioinformatics. 2004;20:1453–1454. doi: 10.1093/bioinformatics/bth078.

- 4. Dembele D, Kastner P. Fuzzy c-means method for clustering microarray data. Bioinformatics. 2003;19:973–980. doi: 10.1093/bioinformatics/btg119.

- 5. Kraj P, Sharma A, Garge N, Podolsky R, McIndoe RA. ParaKMeans: implementation of a parallelized k-means algorithm suitable for general laboratory use. BMC Bioinformatics. 2008;9:200. doi: 10.1186/1471-2105-9-200.

- 6. Medvedovic M, Sivaganesan S. Bayesian infinite mixture model based clustering of gene expression profiles. Bioinformatics. 2002;18:1194–1206. doi: 10.1093/bioinformatics/18.9.1194.

- 7. Medvedovic M, Yeung KY, Bumgarner RE. Bayesian mixture model based clustering of replicated microarray data. Bioinformatics. 2004;20:1222–1232. doi: 10.1093/bioinformatics/bth068.

- 8. Yeung KY, Fraley C, Murua A, Raftery AE, Ruzzo WL. Model-based clustering and data transformations for gene expression data. Bioinformatics. 2001;17:977–987. doi: 10.1093/bioinformatics/17.10.977.

- 9. Ng SK, McLachlan GJ, Wang K, Ben-Tovim Jones L, Ng SW. A mixture model with random-effects components for clustering correlated gene-expression profiles. Bioinformatics. 2006;22:1745–1752. doi: 10.1093/bioinformatics/btl165.

- 10. Qin ZS. Clustering microarray gene expression data using weighted Chinese restaurant process. Bioinformatics. 2006;22:1988–1997. doi: 10.1093/bioinformatics/btl284.

- 11. Xiang Z, Qin ZS, He Y. CRCView: a web server for analyzing and visualizing microarray gene expression data using model-based clustering. Bioinformatics. 2007;23:1843–1845. doi: 10.1093/bioinformatics/btm238.

- 12. Fraley C, Raftery AE. How many clusters? Which clustering method? Answers via model-based cluster analysis. Comput. J. 1998;41:578–588.

- 13. Haughton D, Legrand P, Woolford S. Review of three latent class cluster analysis packages: Latent GOLD, poLCA, and MCLUST. Am. Statistician. 2009;63:81–91.

- 14. Tadesse MG, Sha N, Vannucci M. Bayesian variable selection in clustering high-dimensional data. J. Am. Stat. Assoc. 2005;100:602–617.

- 15. Baladandayuthapani V, Mallick BK, Hong MY, Lupton JR, Turner ND, Carroll RJ. Bayesian hierarchical spatially correlated functional data analysis with application to colon carcinogenesis. Biometrics. 2008;64:64–73. doi: 10.1111/j.1541-0420.2007.00846.x.

- 16. Cheeseman P, Kelly J, Self M, Stutz J, Taylor W, Freeman D. AutoClass: a Bayesian classification system. In: Proceedings of the Fifth International Conference on Machine Learning. San Francisco: Morgan Kaufmann Publishers; 1988.

- 17. Cheeseman P, Stutz J. Bayesian classification (AutoClass): theory and results. In: Fayyad U, Piatetsky-Shapiro G, Smyth P, Uthurusamy R, editors. Advances in Knowledge Discovery and Data Mining. Cambridge: AAAI Press/MIT Press; 1996.

- 18. Moler EJ, Radisky DC, Mian IS. Integrating naive Bayes models and external knowledge to examine copper and iron homeostasis in S. cerevisiae. Physiol. Genomics. 2000;4:127–135. doi: 10.1152/physiolgenomics.2000.4.2.127.

- 19. Chow ML, Moler EJ, Mian IS. Identifying marker genes in transcription profiling data using a mixture of feature relevance experts. Physiol. Genomics. 2001;5:99–111. doi: 10.1152/physiolgenomics.2001.5.2.99.

- 20. Semeiks JR, Grate LR, Mian IS. Text-based analysis of genes, proteins, aging, and cancer. Mech. Ageing Dev. 2005;126:193–208. doi: 10.1016/j.mad.2004.09.028.

- 21. Semeiks JR, Rizki A, Bissell MJ, Mian IS. Ensemble attribute profile clustering: discovering and characterizing groups of genes with similar patterns of biological features. BMC Bioinformatics. 2006;7:147. doi: 10.1186/1471-2105-7-147.

- 22. Hunter L, States D. Bayesian classification of protein structure. IEEE Intell. Sys. 1992;7:67–75.

- 23. Okada Y, Sahara T, Mitsubayashi H, Ohgiya S, Nagashima T. Knowledge-assisted recognition of cluster boundaries in gene expression data. Artif. Intell. Med. 2005;35:171–183. doi: 10.1016/j.artmed.2005.02.007.

- 24. Crook AC, Baddeley R, Osorio D. Identifying the structure in cuttlefish visual signals. Philos. Trans. R. Soc. Lond. B. Biol. Sci. 2002;357:1617–1624. doi: 10.1098/rstb.2002.1070.

- 25. Rasmussen MD, Deshpande MS, Karypis G, Johnson J, Crow JA, Retzel EF. wCLUTO: a web-enabled clustering toolkit. Plant Physiol. 2003;133:510–516. doi: 10.1104/pp.103.024885.

- 26. Saldanha AJ. Java Treeview: extensible visualization of microarray data. Bioinformatics. 2004;20:3246–3248. doi: 10.1093/bioinformatics/bth349.

- 27. Weng S, Dong Q, Balakrishnan R, Christie K, Costanzo M, Dolinski K, Dwight SS, Engel S, Fisk DG, Hong E, et al. Saccharomyces Genome Database (SGD) provides biochemical and structural information for budding yeast proteins. Nucleic Acids Res. 2003;31:216–218. doi: 10.1093/nar/gkg054.

- 28. Yoshimoto H, Saltsman K, Gasch AP, Li HX, Ogawa N, Botstein D, Brown PO, Cyert MS. Genome-wide analysis of gene expression regulated by the calcineurin/Crz1p signaling pathway in Saccharomyces cerevisiae. J. Biol. Chem. 2002;277:31079–31088. doi: 10.1074/jbc.M202718200.

- 29. Bailey TL, Williams N, Misleh C, Li WW. MEME: discovering and analyzing DNA and protein sequence motifs. Nucleic Acids Res. 2006;34:W369–W373. doi: 10.1093/nar/gkl198.

- 30. Beer MA, Tavazoie S. Predicting gene expression from sequence. Cell. 2004;117:185–198. doi: 10.1016/s0092-8674(04)00304-6.

- 31. Pizzuti C, Talia D. P-AutoClass: scalable parallel clustering for mining large data sets. IEEE Trans. Knowl. Data Eng. 2003;15:629–641.
