Policy Gradients with Parameter-Based Exploration for Control

Abstract

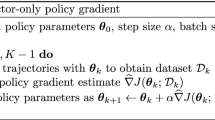

We present a model-free reinforcement learning method for partially observable Markov decision problems. Our method estimates a likelihood gradient by sampling directly in parameter space, which yields lower-variance gradient estimates than those obtained by policy gradient methods such as REINFORCE. On several complex control tasks, including robust standing with a humanoid robot, we show that our method outperforms well-known algorithms from the fields of policy gradients, finite difference methods and population-based heuristics. We also provide a detailed analysis of the differences between our method and the other algorithms.
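
The core idea in the abstract, sampling in parameter space instead of adding noise to each action, can be illustrated with a short sketch. The Python below is a minimal illustration under stated assumptions, not the authors' implementation: each policy parameter is assumed to be drawn independently from a Gaussian N(μ_i, σ_i²) once per episode, the rollout is then deterministic, a moving-average baseline is subtracted from the return, and the likelihood-ratio gradients for μ and σ are multiplied by σ_i² so that they act as per-parameter step sizes. The names `episode_return` and `pgpe`, the quadratic toy return, and every hyperparameter value are hypothetical.

```python
import random


def episode_return(theta, target=(1.0, -2.0)):
    """Hypothetical stand-in for one rollout of a deterministic controller
    with parameters theta; the return is highest (0) at theta == target."""
    return -sum((t - g) ** 2 for t, g in zip(theta, target))


def pgpe(return_fn, dim, iterations=300, samples=20,
         alpha_mu=0.1, alpha_sigma=0.02, sigma0=1.0, seed=0):
    """Sketch of likelihood-ratio gradient ascent in parameter space.

    Each parameter theta_i is drawn from N(mu_i, sigma_i^2) once per
    episode; the rollout itself uses no further randomness.
    """
    rng = random.Random(seed)
    mu = [0.0] * dim
    sigma = [sigma0] * dim
    baseline = None
    for _ in range(iterations):
        # Explore once per episode: sample a full parameter vector per rollout.
        thetas = [[rng.gauss(m, s) for m, s in zip(mu, sigma)]
                  for _ in range(samples)]
        rewards = [return_fn(theta) for theta in thetas]
        mean_r = sum(rewards) / samples
        if baseline is None:
            baseline = mean_r
        grad_mu = [0.0] * dim
        grad_sigma = [0.0] * dim
        for theta, r in zip(thetas, rewards):
            adv = r - baseline
            for i in range(dim):
                diff = theta[i] - mu[i]
                # d/dmu log N = diff / sigma^2 and
                # d/dsigma log N = (diff^2 - sigma^2) / sigma^3,
                # both multiplied here by sigma^2 (per-parameter step size).
                grad_mu[i] += adv * diff
                grad_sigma[i] += adv * (diff ** 2 - sigma[i] ** 2) / sigma[i]
        for i in range(dim):
            mu[i] += alpha_mu * grad_mu[i] / samples
            # Keep the exploration width strictly positive.
            sigma[i] = max(1e-3, sigma[i] + alpha_sigma * grad_sigma[i] / samples)
        # Moving-average reward baseline, used to reduce gradient variance.
        baseline = 0.9 * baseline + 0.1 * mean_r
    return mu, sigma


mu, sigma = pgpe(episode_return, dim=2)
print("mu:", mu, "sigma:", sigma)
```

Because noise enters only through θ, drawn once at the start of each episode, each sampled return depends on a single random draw rather than on one draw per time step as with REINFORCE-style action noise; this is the intuition behind the lower-variance claim. Replacing `episode_return` with a simulator rollout would turn the sketch into an actual control experiment.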

Author information

Authors and Affiliations

- Faculty of Computer Science, Technische Universität München, Germany: Frank Sehnke, Christian Osendorfer, Thomas Rückstieß, Alex Graves & Jürgen Schmidhuber

- IDSIA, Manno-Lugano, Switzerland: Jürgen Schmidhuber

- Max Planck Institute for Biological Cybernetics, Tübingen, Germany: Jan Peters

Editor information

Véra Kůrková, Roman Neruda, Jan Koutník

Copyright information

© 2008 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Sehnke, F., Osendorfer, C., Rückstieß, T., Graves, A., Peters, J., Schmidhuber, J. (2008). Policy Gradients with Parameter-Based Exploration for Control. In: Kůrková, V., Neruda, R., Koutník, J. (eds) Artificial Neural Networks - ICANN 2008. ICANN 2008. Lecture Notes in Computer Science, vol 5163. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-87536-9_40

- DOI: https://doi.org/10.1007/978-3-540-87536-9_40

- Publisher Name: Springer, Berlin, Heidelberg

- Print ISBN: 978-3-540-87535-2

- Online ISBN: 978-3-540-87536-9

- eBook Packages: Computer Science, Computer Science (R0), Springer Nature Proceedings Computer Science
