Policy and Research - Future of Life Institute

We aim to improve the governance of artificial intelligence and its intersection with biological, nuclear and cyber risks.

Introduction

Improving the governance of transformative technologies

The policy team at FLI works to improve national and international governance of AI.

In 2017 we created the influential Asilomar AI Principles, a set of governance principles signed by thousands of leading minds in AI research and industry. More recently, our 2023 open letter sparked a global debate on the rightful place of AI in our societies. FLI regularly participates in intergovernmental conferences and advises governments around the world on questions of AI governance.

Perspectives of Traditional Religions on Positive AI Futures

Most of the global population participates in a traditional religion. Yet the perspectives of these religions are largely absent from strategic AI discussions. This initiative aims to support religious groups in voicing their faith-specific concerns and hopes for a world with AI, and to work with them to resist the harms and realise the benefits.

Promoting a Global AI Agreement

We need international coordination so that AI's benefits reach across the globe rather than concentrating in a few places. The risks of advanced AI won't stay within borders; they will spread globally and affect everyone. We should work towards an international governance framework that shares benefits widely and mitigates the global risks of advanced AI.

Recommendations for the U.S. AI Action Plan

The Future of Life Institute's proposal for President Trump’s AI Action Plan. Our recommendations aim to protect the presidency from AI loss-of-control, promote the development of AI systems free from ideological or social agendas, protect American workers from job loss and replacement, and more.

AI Safety Summits

Governments are exploring collaboration on navigating a world with advanced AI. FLI provides them with advice and support.

Implementing the European AI Act

Our key recommendations include broadening the Act’s scope to regulate general-purpose systems and extending the definition of prohibited manipulation to cover any type of manipulative technique, as well as manipulation that causes societal harm.

Educating about Autonomous Weapons

Military AI applications are rapidly expanding. We develop educational materials about how certain narrow classes of AI-powered weapons can harm national security and destabilize civilization, notably weapons where kill decisions are fully delegated to algorithms.

Our content

Latest policy and research papers

Produced by us

Feedback on the Draft Implementing Regulation on Evaluations and Enforcement Proceedings under the AI Act

April 2026

NIST RFI Response: Security Considerations for Artificial Intelligence Agents

March 2026

FLI’s Recommendations for the AI Impact Summit

February 2026

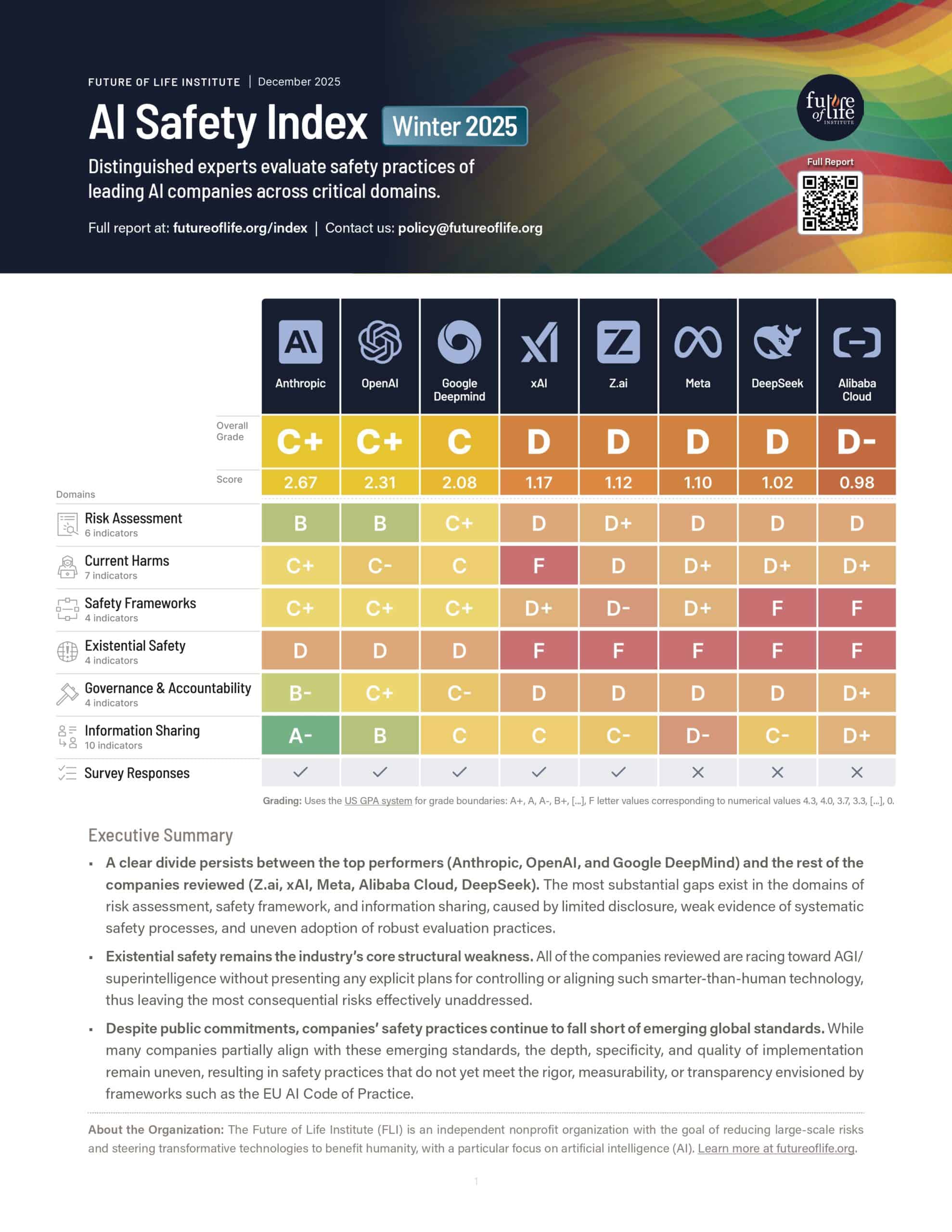

AI Safety Index: Winter 2025 (2-Page Summary)

December 2025

Featuring our staff and fellows

AI Benefit-Sharing Framework: Balancing Access and Safety

Sumaya Nur Adan, Joanna Wiaterek, Varun Sen Bahl, Ima Bello, Luise Eder, José Jaime Villalobos, Anna Yelizarova, et al.

December 2025

Examining Popular Arguments Against AI Existential Risk: A Philosophical Analysis

Torben Swoboda, Risto Uuk, Lode Lauwaert, Andrew Peter Rebera, Ann-Katrien Oimann, Bartlomiej Chomanski, Carina Prunkl

November 2025

A Blueprint for Multinational Advanced AI Development

Adrien Abecassis, Jonathan Barry, Ima Bello, Yoshua Bengio, Antonin Bergeaud, Yann Bonnet, Philipp Hacker, Ben Harack, Sophia Hatz, et al.

November 2025

Looking ahead: Synergies between the EU AI Office and UK AISI

Lara Thurnherr, Risto Uuk, Tekla Emborg, Marta Ziosi, Isabella Wilkinson, Morgan Simpson, Renan Araujo and Charles Martinet

March 2025

Resources

We provide high-quality policy resources to support policymakers.

Autonomous Weapons website

The era in which algorithms decide who lives and who dies is upon us. We must act now to prohibit and regulate these weapons.

Geographical Focus

Where you can find us

We are a hybrid organisation. Most of our policy work takes place in the US (D.C. and California), the EU (Brussels) and at the UN (New York and Geneva).

United States

In the US, FLI participates in the US AI Safety Institute consortium and promotes AI legislation at state and federal levels.

European Union

In Europe, our focus is on strong EU AI Act implementation and encouraging European states to support a treaty on autonomous weapons.

United Nations

At the UN, FLI advocates for a treaty on autonomous weapons and a new international agency to govern AI.

Our content

Featured posts

Here is a selection of posts relating to our policy work:

Contact us

Let's put you in touch with the right person.

We do our best to respond to all incoming queries within three business days. Our team is spread across the globe, so please be considerate and remember that the person you are contacting may not be in your timezone.

Please direct media requests and speaking invitations for Max Tegmark to press@futureoflife.org. All other inquiries can be sent to contact@futureoflife.org.