GitHub - g0t4/mcp-server-commands: Model Context Protocol server to run commands (original) (raw)

Tools

Tools are for LLMs to request. Claude Sonnet 3.5 intelligently uses run_command. And, initial testing shows promising results with Groq Desktop with MCP and llama4 models.

Currently, just one command to rule them all!

run_command- run a command, i.e.hostnameorls -alorecho "hello world"etc- Returns

STDOUTandSTDERRas text - Optional

stdinparameter means your LLM can

* pass code instdinto commands likefish,bash,zsh,python

* create files withcat >> foo/bar.txtfrom the text instdin

- Returns

Warning

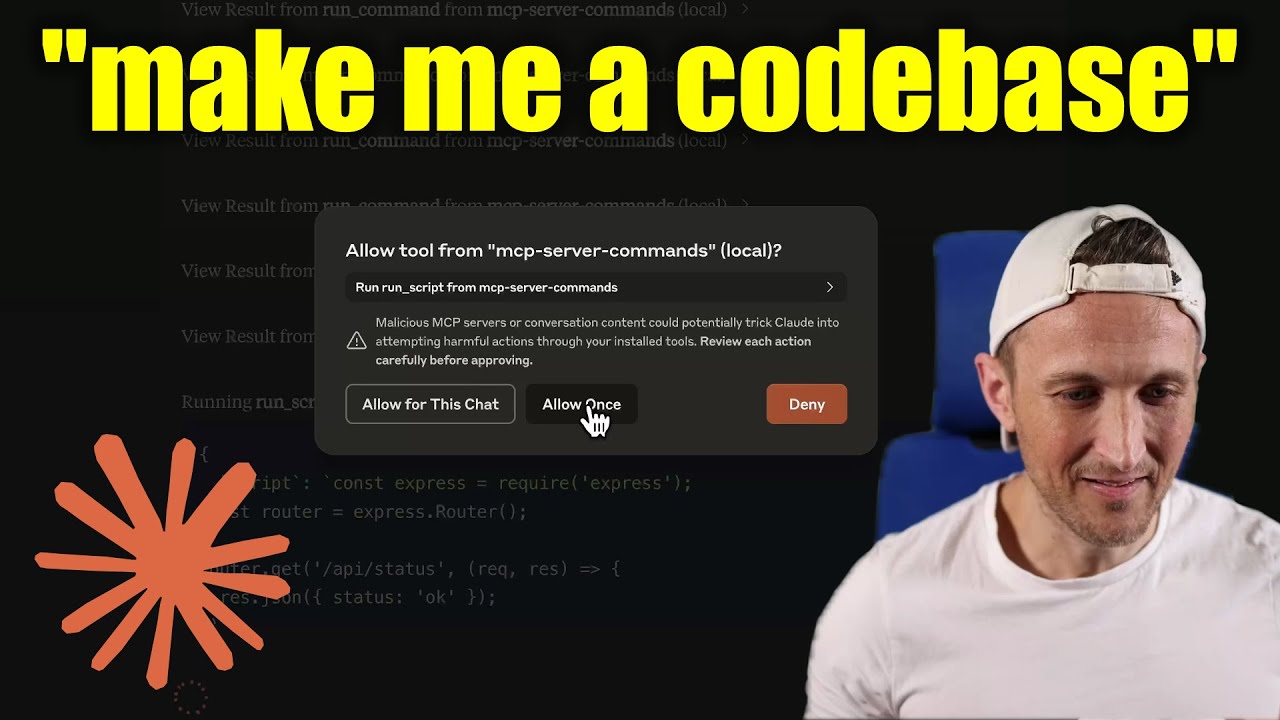

Be careful what you ask this server to run! In Claude Desktop app, use Approve Once (not Allow for This Chat) so you can review each command, use Deny if you don't trust the command. Permissions are dictated by the user that runs the server. DO NOT run with sudo.

Video walkthrough

Prompts

Prompts are for users to include in chat history, i.e. via Zed's slash commands (in its AI Chat panel)

run_command- generate a prompt message with the command output

Development

Install dependencies:

Build the server:

For development with auto-rebuild:

Installation

To use with Claude Desktop, add the server config:

On MacOS: ~/Library/Application Support/Claude/claude_desktop_config.jsonOn Windows: %APPDATA%/Claude/claude_desktop_config.json

Groq Desktop (beta, macOS) uses ~/Library/Application Support/groq-desktop-app/settings.json

Use the published npm package

Published to npm as mcp-server-commands using this workflow

{ "mcpServers": { "mcp-server-commands": { "command": "npx", "args": ["mcp-server-commands"] } } }

Use a local build (repo checkout)

Make sure to run npm run build

{ "mcpServers": { "mcp-server-commands": { // works b/c of shebang in index.js "command": "/path/to/mcp-server-commands/build/index.js" } } }

Local Models

- Most models are trained such that they don't think they can run commands for you.

- Sometimes, they use tools w/o hesitation... other times, I have to coax them.

- Use a system prompt or prompt template to instruct that they should follow user requests. Including to use

run_commandswithout double checking.

- Ollama is a great way to run a model locally (w/ Open-WebUI)

NOTE: make sure to review variants and sizes, so the model fits in your VRAM to perform well!

Probably the best so far is OpenHands LM

ollama pull https://huggingface.co/lmstudio-community/openhands-lm-32b-v0.1-GGUF

https://ollama.com/library/devstral

ollama pull devstral

Qwen2.5-Coder has tool use but you have to coax it

ollama pull qwen2.5-coder

HTTP / OpenAPI

The server is implemented with the STDIO transport. For HTTP, use mcpo for an OpenAPI compatible web server interface. This works with Open-WebUI

uvx mcpo --port 3010 --api-key "supersecret" -- npx mcp-server-commands

uvx runs mcpo => mcpo run npx => npx runs mcp-server-commands

then, mcpo bridges STDIO <=> HTTP

Warning

I briefly used mcpo with open-webui, make sure to vet it for security concerns.

Logging

Claude Desktop app writes logs to ~/Library/Logs/Claude/mcp-server-mcp-server-commands.log

By default, only important messages are logged (i.e. errors). If you want to see more messages, add --verbose to the args when configuring the server.

By the way, logs are written to STDERR because that is what Claude Desktop routes to the log files. In the future, I expect well formatted log messages to be written over the STDIO transport to the MCP client (note: not Claude Desktop app).

Debugging

Since MCP servers communicate over stdio, debugging can be challenging. We recommend using the MCP Inspector, which is available as a package script:

The Inspector will provide a URL to access debugging tools in your browser.