How2Sign Dataset (original) (raw)

How2Sign Dataset

Continuous American Sign Language Multiview videos Depth data 2D & 3D skeletons Gloss annotation English translation

First large-scale multimodal and multiview continuous American Sign Language dataset

Download Sample " "Download Full Dataset

ABOUT

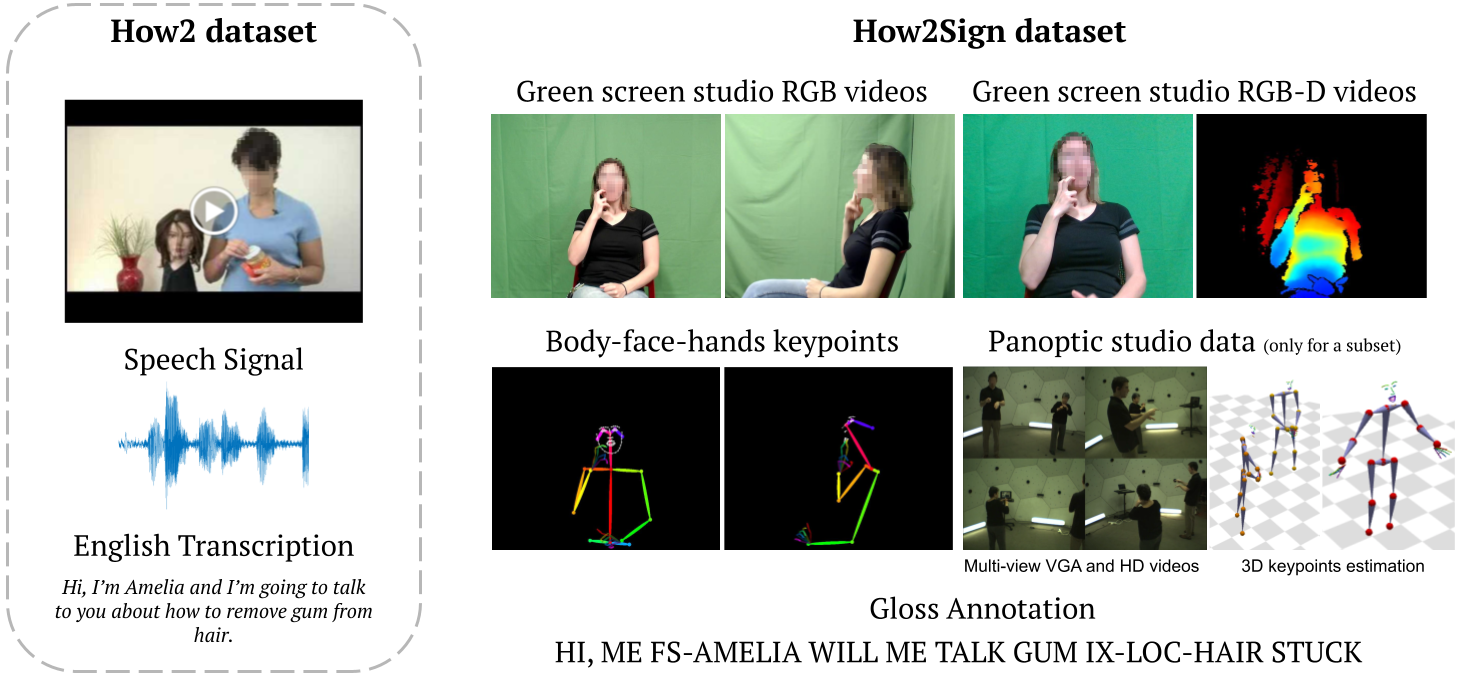

We introduce How2Sign, a multimodal and multiview continuous American Sign Language (ASL) dataset, consisting of a parallel corpus of more than 80 hours of sign language videos and a set of corresponding modalities including speech, English transcripts, and depth.

A three-hour subset was further recorded in the Panoptic studio enabling detailed 3D pose estimation.

This dataset is publicly available for research purposes only.

Download

This section is under construction.

We will be releasing the other modalities soon!

The dataset is publicly available for research purposes only.

Download the videos, annotations and metadata separately

You can also use our script to automatic create the folder structure and download the necessary modalities

Green Screen RGB videos (frontal view)

Green Screen RGB videos (side view)

Green Screen RGB clips* (frontal view)

Green Screen RGB clips* (side view)

B-F-H 2D Keypoints clips* (frontal view)

English Translation (original)

English Translation (manually re-aligned)

Publication

How2Sign: A Large-scale Multimodal Dataset for Continuous American Sign Language

Amanda Duarte,Shruti Palaskar,Lucas Ventura,Deepti Ghadiyaram,Kenneth DeHaan,Florian Metze,Jordi Torres, andXavier Giró-i-Nieto

CVPR, 2021

[PDF] [1' video] [Poster]

When using the How2Sign Dataset please reference:

@inproceedings{Duarte_CVPR2021,

title={{How2Sign: A Large-scale Multimodal Dataset for Continuous American Sign Language}},

author={Duarte, Amanda and Palaskar, Shruti and Ventura, Lucas and Ghadiyaram, Deepti and DeHaan, Kenneth and

Metze, Florian and Torres, Jordi and Giro-i-Nieto, Xavier},

booktitle={Conference on Computer Vision and Pattern Recognition (CVPR)},

year={2021}

}