Generated Knowledge Prompting | Prompt Engineering Guide

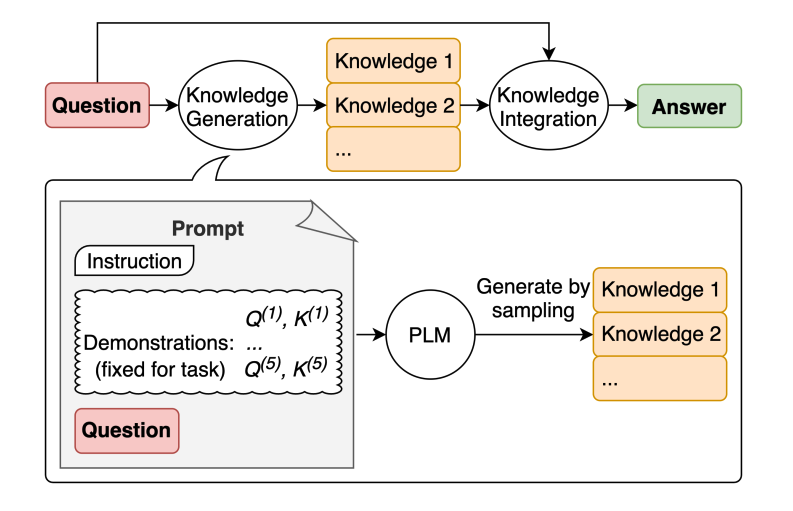

Image Source: Liu et al. 2022

LLMs continue to improve, and one popular technique is to incorporate knowledge or information that helps the model make more accurate predictions.

Using a similar idea, can the model also be used to generate knowledge before making a prediction? That is what Liu et al. 2022 attempt in their paper: generate knowledge to be used as part of the prompt. In particular, how helpful is this for tasks such as commonsense reasoning?

Let's try a simple prompt:

Prompt:

Output:

This type of mistake reveals the limitations of LLMs on tasks that require more knowledge about the world. How do we improve this with knowledge generation?

First, we generate a few "knowledges":

Prompt:

Knowledge 1:

Knowledge 2:

We are using the prompt provided in the paper by Liu et al. 2022.
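The knowledge-generation step can be sketched as a small prompt builder. This is a minimal illustration, not the paper's code: the `Input:`/`Knowledge:` layout, the function name `build_knowledge_prompt`, and the demonstration pair are assumptions made for this example. The resulting string would be sent to whatever model you use, sampling several completions to collect several knowledge statements.

```python
def build_knowledge_prompt(demonstrations, statement):
    """Assemble a few-shot prompt that asks the model for a knowledge statement.

    demonstrations: list of (input_text, knowledge_text) pairs (hypothetical examples).
    statement: the new input we want knowledge about.
    """
    blocks = [f"Input: {inp}\nKnowledge: {know}" for inp, know in demonstrations]
    # Leave the final "Knowledge:" open so the model completes it.
    blocks.append(f"Input: {statement}\nKnowledge:")
    return "\n\n".join(blocks)


# Made-up demonstration pair, used only to show the layout.
demos = [("A week has eight days.", "A week has seven days.")]
prompt = build_knowledge_prompt(demos, "A year has thirteen months.")
print(prompt)
```

Keeping the demonstrations as plain data makes it easy to swap in the demonstrations from the paper or ones written for your own task.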

The next step is to integrate the knowledge and get a prediction. I reformatted the question into a QA format to guide the shape of the answer.

Prompt:

Answer 1 (confidence very high):

Answer 2 (confidence a lot lower):

Some really interesting things happened with this example. In the first answer, the model was very confident, but in the second it was much less so. I simplified the process for demonstration purposes, but there are a few more details to consider when arriving at the final answer. Check out the paper for more.
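One of those details is how the final answer is chosen. A minimal sketch, assuming each generated knowledge statement is paired with the question in its own prompt and that the model's answer comes with a confidence score: keep the answer with the highest confidence. The `Question:`/`Knowledge:`/`Explain and Answer:` layout, the function names, and the scores below are hypothetical and chosen only for illustration; the paper describes the exact procedure.

```python
def build_qa_prompt(question, knowledge):
    """Pair the question with one knowledge statement in a QA layout (assumed format)."""
    return f"Question: {question}\nKnowledge: {knowledge}\nExplain and Answer:"


def select_answer(candidates):
    """Return the (answer, confidence) pair with the highest confidence.

    candidates: list of (answer, confidence) pairs, one per knowledge statement.
    """
    if not candidates:
        raise ValueError("need at least one candidate answer")
    return max(candidates, key=lambda pair: pair[1])


# One prompt would be built per knowledge statement (placeholders shown here).
qa_prompt = build_qa_prompt("<question>", "<knowledge statement>")

# Hypothetical answers and scores; in practice they would come from the model.
candidates = [("No", 0.92), ("Yes", 0.55)]
best_answer, best_confidence = select_answer(candidates)
print(best_answer, best_confidence)
```

Taking the maximum means a single well-supported answer wins even when other knowledge statements lead the model astray; a majority vote over answers would be an alternative design.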
