Adding sensors on a robot — Isaac Lab Documentation (original) (raw)

Adding sensors on a robot#

While the asset classes allow us to create and simulate the physical embodiment of the robot, sensors help in obtaining information about the environment. They typically update at a lower frequency than the simulation and are useful for obtaining different proprioceptive and exteroceptive information. For example, a camera sensor can be used to obtain the visual information of the environment, and a contact sensor can be used to obtain the contact information of the robot with the environment.

In this tutorial, we will see how to add different sensors to a robot. We will use the ANYmal-C robot for this tutorial. The ANYmal-C robot is a quadrupedal robot with 12 degrees of freedom. It has 4 legs, each with 3 degrees of freedom. The robot has the following sensors:

- A camera sensor on the head of the robot which provides RGB-D images

- A height scanner sensor that provides terrain height information

- Contact sensors on the feet of the robot that provide contact information

We continue this tutorial from the previous tutorial on Using the Interactive Scene, where we learned about the scene.InteractiveScene class.

The Code#

The tutorial corresponds to the add_sensors_on_robot.py script in thescripts/tutorials/04_sensors directory.

1# Copyright (c) 2022-2025, The Isaac Lab Project Developers. 2# All rights reserved. 3# 4# SPDX-License-Identifier: BSD-3-Clause 5 6""" 7This script demonstrates how to add and simulate on-board sensors for a robot. 8 9We add the following sensors on the quadruped robot, ANYmal-C (ANYbotics): 10 11* USD-Camera: This is a camera sensor that is attached to the robot's base. 12* Height Scanner: This is a height scanner sensor that is attached to the robot's base. 13* Contact Sensor: This is a contact sensor that is attached to the robot's feet. 14 15.. code-block:: bash 16 17 # Usage 18 ./isaaclab.sh -p scripts/tutorials/04_sensors/add_sensors_on_robot.py --enable_cameras 19 20""" 21 22"""Launch Isaac Sim Simulator first.""" 23 24import argparse 25 26from isaaclab.app import AppLauncher 27 28# add argparse arguments 29parser = argparse.ArgumentParser(description="Tutorial on adding sensors on a robot.") 30parser.add_argument("--num_envs", type=int, default=2, help="Number of environments to spawn.") 31# append AppLauncher cli args 32AppLauncher.add_app_launcher_args(parser) 33# parse the arguments 34args_cli = parser.parse_args() 35 36# launch omniverse app 37app_launcher = AppLauncher(args_cli) 38simulation_app = app_launcher.app 39 40"""Rest everything follows.""" 41 42import torch 43 44import isaaclab.sim as sim_utils 45from isaaclab.assets import ArticulationCfg, AssetBaseCfg 46from isaaclab.scene import InteractiveScene, InteractiveSceneCfg 47from isaaclab.sensors import CameraCfg, ContactSensorCfg, RayCasterCfg, patterns 48from isaaclab.utils import configclass 49 50## 51# Pre-defined configs 52## 53from isaaclab_assets.robots.anymal import ANYMAL_C_CFG # isort: skip 54 55 56@configclass 57class SensorsSceneCfg(InteractiveSceneCfg): 58 """Design the scene with sensors on the robot.""" 59 60 # ground plane 61 ground = AssetBaseCfg(prim_path="/World/defaultGroundPlane", spawn=sim_utils.GroundPlaneCfg()) 62 63 # lights 64 dome_light = AssetBaseCfg( 65 prim_path="/World/Light", spawn=sim_utils.DomeLightCfg(intensity=3000.0, color=(0.75, 0.75, 0.75)) 66 ) 67 68 # robot 69 robot: ArticulationCfg = ANYMAL_C_CFG.replace(prim_path="{ENV_REGEX_NS}/Robot") 70 71 # sensors 72 camera = CameraCfg( 73 prim_path="{ENV_REGEX_NS}/Robot/base/front_cam", 74 update_period=0.1, 75 height=480, 76 width=640, 77 data_types=["rgb", "distance_to_image_plane"], 78 spawn=sim_utils.PinholeCameraCfg( 79 focal_length=24.0, focus_distance=400.0, horizontal_aperture=20.955, clipping_range=(0.1, 1.0e5) 80 ), 81 offset=CameraCfg.OffsetCfg(pos=(0.510, 0.0, 0.015), rot=(0.5, -0.5, 0.5, -0.5), convention="ros"), 82 ) 83 height_scanner = RayCasterCfg( 84 prim_path="{ENV_REGEX_NS}/Robot/base", 85 update_period=0.02, 86 offset=RayCasterCfg.OffsetCfg(pos=(0.0, 0.0, 20.0)), 87 attach_yaw_only=True, 88 pattern_cfg=patterns.GridPatternCfg(resolution=0.1, size=[1.6, 1.0]), 89 debug_vis=True, 90 mesh_prim_paths=["/World/defaultGroundPlane"], 91 ) 92 contact_forces = ContactSensorCfg( 93 prim_path="{ENV_REGEX_NS}/Robot/.*_FOOT", update_period=0.0, history_length=6, debug_vis=True 94 ) 95 96 97def run_simulator(sim: sim_utils.SimulationContext, scene: InteractiveScene): 98 """Run the simulator.""" 99 # Define simulation stepping 100 sim_dt = sim.get_physics_dt() 101 sim_time = 0.0 102 count = 0 103 104 # Simulate physics 105 while simulation_app.is_running(): 106 # Reset 107 if count % 500 == 0: 108 # reset counter 109 count = 0 110 # reset the scene entities 111 # root state 112 # we offset the root state by the origin since the states are written in simulation world frame 113 # if this is not done, then the robots will be spawned at the (0, 0, 0) of the simulation world 114 root_state = scene["robot"].data.default_root_state.clone() 115 root_state[:, :3] += scene.env_origins 116 scene["robot"].write_root_pose_to_sim(root_state[:, :7]) 117 scene["robot"].write_root_velocity_to_sim(root_state[:, 7:]) 118 # set joint positions with some noise 119 joint_pos, joint_vel = ( 120 scene["robot"].data.default_joint_pos.clone(), 121 scene["robot"].data.default_joint_vel.clone(), 122 ) 123 joint_pos += torch.rand_like(joint_pos) * 0.1 124 scene["robot"].write_joint_state_to_sim(joint_pos, joint_vel) 125 # clear internal buffers 126 scene.reset() 127 print("[INFO]: Resetting robot state...") 128 # Apply default actions to the robot 129 # -- generate actions/commands 130 targets = scene["robot"].data.default_joint_pos 131 # -- apply action to the robot 132 scene["robot"].set_joint_position_target(targets) 133 # -- write data to sim 134 scene.write_data_to_sim() 135 # perform step 136 sim.step() 137 # update sim-time 138 sim_time += sim_dt 139 count += 1 140 # update buffers 141 scene.update(sim_dt) 142 143 # print information from the sensors 144 print("-------------------------------") 145 print(scene["camera"]) 146 print("Received shape of rgb image: ", scene["camera"].data.output["rgb"].shape) 147 print("Received shape of depth image: ", scene["camera"].data.output["distance_to_image_plane"].shape) 148 print("-------------------------------") 149 print(scene["height_scanner"]) 150 print("Received max height value: ", torch.max(scene["height_scanner"].data.ray_hits_w[..., -1]).item()) 151 print("-------------------------------") 152 print(scene["contact_forces"]) 153 print("Received max contact force of: ", torch.max(scene["contact_forces"].data.net_forces_w).item()) 154 155 156def main(): 157 """Main function.""" 158 159 # Initialize the simulation context 160 sim_cfg = sim_utils.SimulationCfg(dt=0.005, device=args_cli.device) 161 sim = sim_utils.SimulationContext(sim_cfg) 162 # Set main camera 163 sim.set_camera_view(eye=[3.5, 3.5, 3.5], target=[0.0, 0.0, 0.0]) 164 # design scene 165 scene_cfg = SensorsSceneCfg(num_envs=args_cli.num_envs, env_spacing=2.0) 166 scene = InteractiveScene(scene_cfg) 167 # Play the simulator 168 sim.reset() 169 # Now we are ready! 170 print("[INFO]: Setup complete...") 171 # Run the simulator 172 run_simulator(sim, scene) 173 174 175if name == "main": 176 # run the main function 177 main() 178 # close sim app 179 simulation_app.close()

The Code Explained#

Similar to the previous tutorials, where we added assets to the scene, the sensors are also added to the scene using the scene configuration. All sensors inherit from the sensors.SensorBase class and are configured through their respective config classes. Each sensor instance can define its own update period, which is the frequency at which the sensor is updated. The update period is specified in seconds through the sensors.SensorBaseCfg.update_period attribute.

Depending on the specified path and the sensor type, the sensors are attached to the prims in the scene. They may have an associated prim that is created in the scene or they may be attached to an existing prim. For instance, the camera sensor has a corresponding prim that is created in the scene, whereas for the contact sensor, the activating the contact reporting is a property on a rigid body prim.

In the following, we introduce the different sensors we use in this tutorial and how they are configured. For more description about them, please check the sensors module.

Camera sensor#

A camera is defined using the sensors.CameraCfg. It is based on the USD Camera sensor and the different data types are captured using Omniverse Replicator API. Since it has a corresponding prim in the scene, the prims are created in the scene at the specified prim path.

The configuration of the camera sensor includes the following parameters:

- spawn: The type of USD camera to create. This can be eitherPinholeCameraCfg or FisheyeCameraCfg.

- offset: The offset of the camera sensor from the parent prim.

- data_types: The data types to capture. This can be

rgb,distance_to_image_plane,normals, or other types supported by the USD Camera sensor.

To attach an RGB-D camera sensor to the head of the robot, we specify an offset relative to the base frame of the robot. The offset is specified as a translation and rotation relative to the base frame, and the convention in which the offset is specified.

In the following, we show the configuration of the camera sensor used in this tutorial. We set the update period to 0.1s, which means that the camera sensor is updated at 10Hz. The prim path expression is set to {ENV_REGEX_NS}/Robot/base/front_cam where the {ENV_REGEX_NS} is the environment namespace,"Robot" is the name of the robot, "base" is the name of the prim to which the camera is attached, and "front_cam" is the name of the prim associated with the camera sensor.

camera = CameraCfg(

prim_path="{ENV_REGEX_NS}/Robot/base/front_cam",

update_period=0.1,

height=480,

width=640,

data_types=["rgb", "distance_to_image_plane"],

spawn=sim_utils.PinholeCameraCfg(

focal_length=24.0, focus_distance=400.0, horizontal_aperture=20.955, clipping_range=(0.1, 1.0e5)

),

offset=CameraCfg.OffsetCfg(pos=(0.510, 0.0, 0.015), rot=(0.5, -0.5, 0.5, -0.5), convention="ros"),

)Height scanner#

The height-scanner is implemented as a virtual sensor using the NVIDIA Warp ray-casting kernels. Through the sensors.RayCasterCfg, we can specify the pattern of rays to cast and the meshes against which to cast the rays. Since they are virtual sensors, there is no corresponding prim created in the scene for them. Instead they are attached to a prim in the scene, which is used to specify the location of the sensor.

For this tutorial, the ray-cast based height scanner is attached to the base frame of the robot. The pattern of rays is specified using the pattern attribute. For a uniform grid pattern, we specify the pattern using GridPatternCfg. Since we only care about the height information, we do not need to consider the roll and pitch of the robot. Hence, we set the attach_yaw_only to true.

For the height-scanner, you can visualize the points where the rays hit the mesh. This is done by setting the debug_vis attribute to true.

The entire configuration of the height-scanner is as follows:

height_scanner = RayCasterCfg(

prim_path="{ENV_REGEX_NS}/Robot/base",

update_period=0.02,

offset=RayCasterCfg.OffsetCfg(pos=(0.0, 0.0, 20.0)),

attach_yaw_only=True,

pattern_cfg=patterns.GridPatternCfg(resolution=0.1, size=[1.6, 1.0]),

debug_vis=True,

mesh_prim_paths=["/World/defaultGroundPlane"],

)Contact sensor#

Contact sensors wrap around the PhysX contact reporting API to obtain the contact information of the robot with the environment. Since it relies of PhysX, the contact sensor expects the contact reporting API to be enabled on the rigid bodies of the robot. This can be done by setting theactivate_contact_sensors to true in the asset configuration.

Through the sensors.ContactSensorCfg, it is possible to specify the prims for which we want to obtain the contact information. Additional flags can be set to obtain more information about the contact, such as the contact air time, contact forces between filtered prims, etc.

In this tutorial, we attach the contact sensor to the feet of the robot. The feet of the robot are named "LF_FOOT", "RF_FOOT", "LH_FOOT", and "RH_FOOT". We pass a Regex expression".*_FOOT" to simplify the prim path specification. This Regex expression matches all prims that end with "_FOOT".

We set the update period to 0 to update the sensor at the same frequency as the simulation. Additionally, for contact sensors, we can specify the history length of the contact information to store. For this tutorial, we set the history length to 6, which means that the contact information for the last 6 simulation steps is stored.

The entire configuration of the contact sensor is as follows:

contact_forces = ContactSensorCfg(

prim_path="{ENV_REGEX_NS}/Robot/.*_FOOT", update_period=0.0, history_length=6, debug_vis=True

)Running the simulation loop#

Similar to when using assets, the buffers and physics handles for the sensors are initialized only when the simulation is played, i.e., it is important to call sim.reset() after creating the scene.

# Play the simulator

sim.reset()Besides that, the simulation loop is similar to the previous tutorials. The sensors are updated as part of the scene update and they internally handle the updating of their buffers based on their update periods.

The data from the sensors can be accessed through their data attribute. As an example, we show how to access the data for the different sensors created in this tutorial:

# print information from the sensors

print("-------------------------------")

print(scene["camera"])

print("Received shape of rgb image: ", scene["camera"].data.output["rgb"].shape)

print("Received shape of depth image: ", scene["camera"].data.output["distance_to_image_plane"].shape)

print("-------------------------------")

print(scene["height_scanner"])

print("Received max height value: ", torch.max(scene["height_scanner"].data.ray_hits_w[..., -1]).item())

print("-------------------------------")

print(scene["contact_forces"])

print("Received max contact force of: ", torch.max(scene["contact_forces"].data.net_forces_w).item())The Code Execution#

Now that we have gone through the code, let’s run the script and see the result:

./isaaclab.sh -p scripts/tutorials/04_sensors/add_sensors_on_robot.py --num_envs 2 --enable_cameras

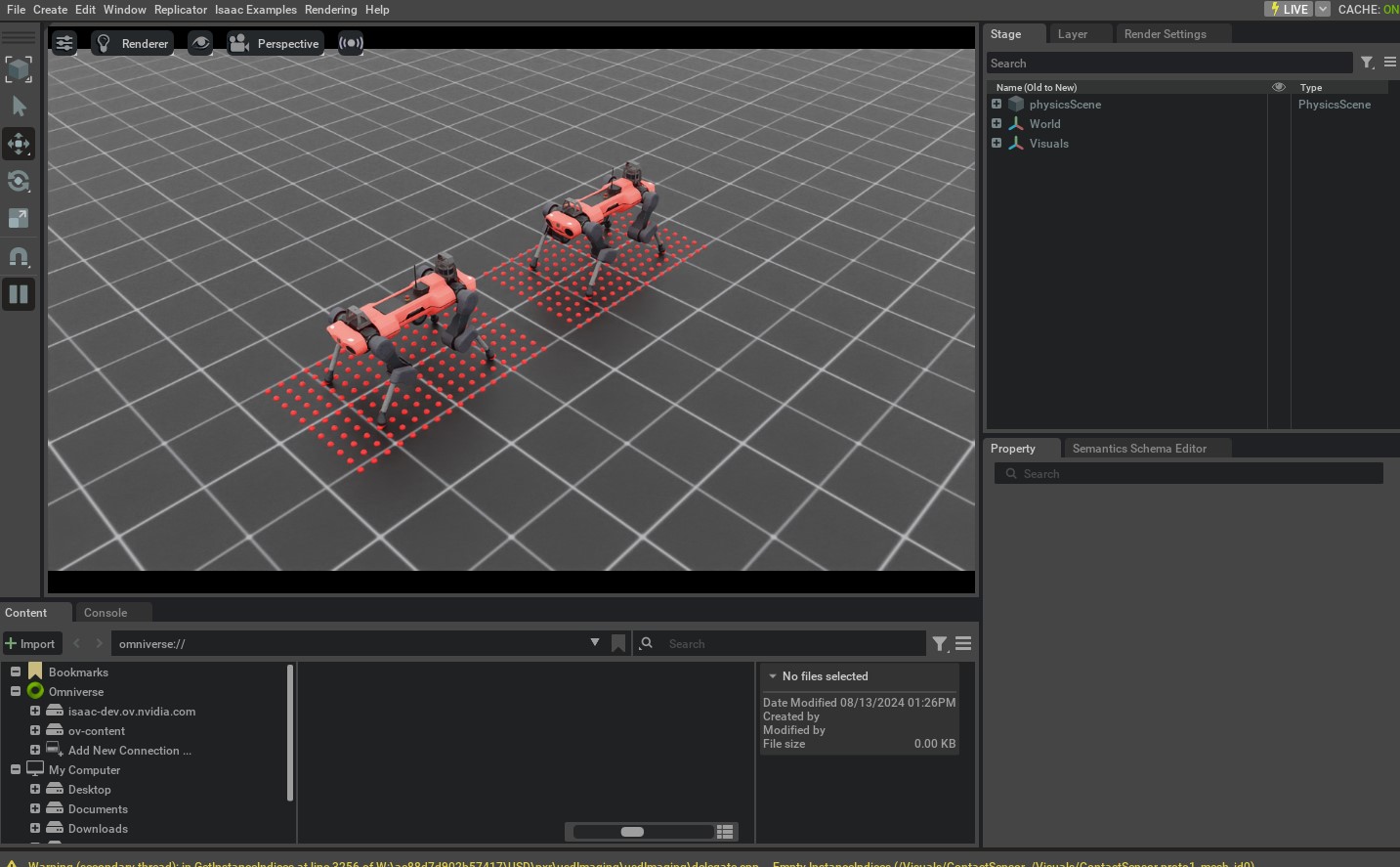

This command should open a stage with a ground plane, lights, and two quadrupedal robots. Around the robots, you should see red spheres that indicate the points where the rays hit the mesh. Additionally, you can switch the viewport to the camera view to see the RGB image captured by the camera sensor. Please check here for more information on how to switch the viewport to the camera view.

To stop the simulation, you can either close the window, or press Ctrl+C in the terminal.

While in this tutorial, we went over creating and using different sensors, there are many more sensors available in the sensors module. We include minimal examples of using these sensors in thescripts/tutorials/04_sensors directory. For completeness, these scripts can be run using the following commands:

Frame Transformer

./isaaclab.sh -p scripts/tutorials/04_sensors/run_frame_transformer.py

Ray Caster

./isaaclab.sh -p scripts/tutorials/04_sensors/run_ray_caster.py

Ray Caster Camera

./isaaclab.sh -p scripts/tutorials/04_sensors/run_ray_caster_camera.py

USD Camera

./isaaclab.sh -p scripts/tutorials/04_sensors/run_usd_camera.py --enable_cameras