LeakyReLU — PyTorch 2.7 documentation

class torch.nn.LeakyReLU(negative_slope=0.01, inplace=False)

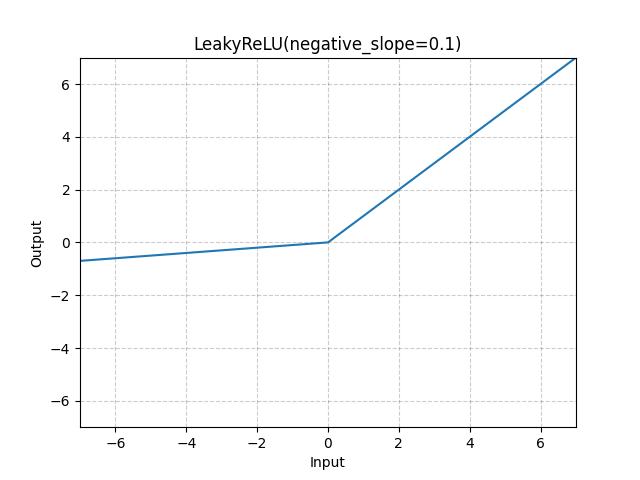

Applies the LeakyReLU function element-wise.

$$\text{LeakyReLU}(x) = \max(0, x) + \text{negative\_slope} * \min(0, x)$$

or

$$\text{LeakyReLU}(x) = \begin{cases} x, & \text{if } x \geq 0 \\ \text{negative\_slope} \times x, & \text{otherwise} \end{cases}$$
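The two definitions above describe the same function. As a minimal sketch in plain Python (the helper names `leaky_relu_maxmin` and `leaky_relu_piecewise` are illustrative only and are not part of PyTorch), the equivalence can be checked on scalars:

```python
def leaky_relu_maxmin(x: float, negative_slope: float = 0.01) -> float:
    """Scalar form: max(0, x) + negative_slope * min(0, x)."""
    return max(0.0, x) + negative_slope * min(0.0, x)


def leaky_relu_piecewise(x: float, negative_slope: float = 0.01) -> float:
    """Scalar form: x if x >= 0, otherwise negative_slope * x."""
    return x if x >= 0 else negative_slope * x


# Compare both forms on a few sample inputs (hypothetical test values).
for x in [-3.0, -0.5, 0.0, 2.0]:
    a = leaky_relu_maxmin(x, negative_slope=0.1)
    b = leaky_relu_piecewise(x, negative_slope=0.1)
    assert abs(a - b) < 1e-12
    print(x, a)
```

For non-negative inputs the `min` term is zero, and for negative inputs the `max` term is zero, which is why the two forms agree.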

Parameters

- negative_slope (float) – Slope of the function for negative input values. Default: 1e-2

- inplace (bool) – If True, performs the operation in-place. Default: False
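As a rough illustration of the two parameters, the sketch below applies the module with `negative_slope=0.1` both out-of-place and in-place. The sample values and variable names are arbitrary choices for this example.

```python
import torch
from torch import nn

x = torch.tensor([-2.0, -0.5, 0.0, 1.5])

# Out-of-place (default): a new tensor is returned and x is left unchanged.
out = nn.LeakyReLU(negative_slope=0.1)(x)

# In-place: the result is written into the input tensor's own storage.
y = x.clone()
out_inplace = nn.LeakyReLU(negative_slope=0.1, inplace=True)(y)

print(out)  # negative entries are scaled by 0.1, the rest pass through
print(y)    # y now holds the activated values
print(out_inplace.data_ptr() == y.data_ptr())  # same underlying memory
```

In-place operation avoids allocating a new tensor but overwrites the input, so it is only appropriate when the original values are no longer needed.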

Shape:

- Input: (*), where * means any number of dimensions

- Output: (*), same shape as the input
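Because the function is applied element-wise, the output shape should match the input shape regardless of rank. A small sketch that checks this for a few arbitrarily chosen shapes, including a zero-dimensional tensor:

```python
import torch
from torch import nn

m = nn.LeakyReLU()  # default negative_slope=0.01

# Example shapes picked for illustration: scalar, vector, matrix, 4-D batch.
shapes = [(), (5,), (2, 3), (4, 1, 8, 8)]
results = []
for shape in shapes:
    x = torch.randn(shape)
    y = m(x)
    results.append(tuple(y.shape))
    print(shape, "->", tuple(y.shape))
```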

Examples:

    import torch
    from torch import nn

    m = nn.LeakyReLU(0.1)    # negative_slope = 0.1
    input = torch.randn(2)   # two random samples
    output = m(input)