Mish - PyTorch 2.7 documentation

class torch.nn.Mish(inplace=False)
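The signature exposes an `inplace` flag that this page does not describe further. A minimal sketch, assuming the usual PyTorch meaning of `inplace` (write the result back into the input tensor instead of allocating a new one), compares it with the out-of-place functional form `torch.nn.functional.mish`. The tensor values are arbitrary illustration data. As with other in-place operations, this saves memory but overwrites the original values, so avoid it when those values are still needed.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

x = torch.tensor([-2.0, 0.0, 2.0])
expected = F.mish(x)          # out-of-place: x is left untouched here

# Assumption: inplace=True overwrites x with Mish(x) and returns that same tensor.
m = nn.Mish(inplace=True)
y = m(x)

shares_storage = y.data_ptr() == x.data_ptr()  # returned tensor is x itself
values_match = torch.allclose(x, expected)     # x now holds the Mish values
print(shares_storage, values_match)
```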

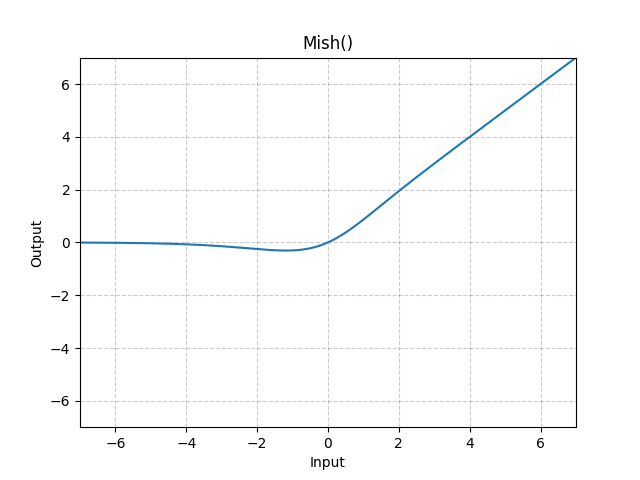

Applies the Mish function, element-wise.

Mish was introduced in the paper "Mish: A Self Regularized Non-Monotonic Neural Activation Function".
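The "non-monotonic" in the paper's title refers to the curve's behavior for negative inputs: the output dips below zero and then climbs back to 0 at x = 0. The sketch below checks this at a few arbitrarily chosen points using `torch.nn.functional.mish`, then roughly locates the dip with a grid search. The grid search is only a numerical estimate, not an exact minimum.

```python
import torch
import torch.nn.functional as F

xs = torch.tensor([-3.0, -1.0, 0.0, 1.0])
ys = F.mish(xs)
print(ys)

# Moving right from -3 to -1 the output decreases, then it rises again to 0:
# Mish is not monotonic on the negative axis.
falls_then_rises = bool(ys[0] > ys[1]) and bool(ys[1] < ys[2])
print(falls_then_rises)

# Rough location of the dip on a fine grid over [-5, 0].
grid = torch.linspace(-5.0, 0.0, steps=50001)
vals = F.mish(grid)
i = torch.argmin(vals)
x_min, y_min = grid[i].item(), vals[i].item()
print(f"min ~ {y_min:.4f} at x ~ {x_min:.3f}")
```

On this grid the minimum should land near x ≈ -1.19 with a value of about -0.31, so Mish is bounded below, while for large positive x it grows like x and is unbounded above.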

$$\text{Mish}(x) = x * \text{Tanh}(\text{Softplus}(x))$$
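Since Softplus(x) = ln(1 + e^x), the definition expands to Mish(x) = x * tanh(ln(1 + e^x)). A minimal sketch that transcribes the formula directly and compares it with the built-in `torch.nn.functional.mish`. The helper name `mish_reference` is hypothetical and not part of PyTorch.

```python
import torch
import torch.nn.functional as F

def mish_reference(x: torch.Tensor) -> torch.Tensor:
    # Hypothetical helper: direct transcription of Mish(x) = x * Tanh(Softplus(x)).
    # F.softplus with its default beta=1 computes log(1 + exp(x)).
    return x * torch.tanh(F.softplus(x))

x = torch.linspace(-6.0, 6.0, steps=121)
ref = mish_reference(x)
builtin = F.mish(x)
agrees = torch.allclose(ref, builtin, atol=1e-6)
print(agrees)
```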

Shape:

- Input: $(*)$, where $*$ means any number of dimensions.

- Output: $(*)$, same shape as the input.
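To illustrate the shape rule, the sketch below (tensor sizes chosen arbitrarily) passes inputs of several ranks, including a 0-d scalar tensor, through `nn.Mish` and records the input and output shapes. It also checks the element-wise property: applying the module to a single element gives the same value as picking that element out of the full output.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
m = nn.Mish()

# Any number of dimensions is accepted, including a 0-d (scalar) tensor.
pairs = []
for shape in [(), (5,), (2, 3), (4, 3, 8, 8)]:
    x = torch.randn(shape)
    y = m(x)
    pairs.append((tuple(x.shape), tuple(y.shape)))
    print(tuple(x.shape), "->", tuple(y.shape))

# Element-wise: each output entry depends only on the matching input entry.
x = torch.randn(2, 3)
full = m(x)
single = m(x[1, 2])
same_value = torch.allclose(full[1, 2], single)
print(same_value)
```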

Examples:

```python
import torch
import torch.nn as nn

m = nn.Mish()
input = torch.randn(2)
output = m(input)
```
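Beyond a standalone call, the module is typically placed between layers like any other activation. A minimal sketch, with arbitrary layer sizes, random data, and a placeholder loss chosen only for illustration, that uses `nn.Mish` inside an `nn.Sequential` model and runs a backward pass:

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical toy model: sizes are arbitrary; Mish sits between two linear layers.
model = nn.Sequential(
    nn.Linear(4, 8),
    nn.Mish(),
    nn.Linear(8, 1),
)

x = torch.randn(3, 4)        # batch of 3 samples with 4 features each
out = model(x)               # shape (3, 1)
loss = out.pow(2).mean()     # placeholder loss for illustration only
loss.backward()              # gradients flow back through Mish to the first layer

print(out.shape)
print(model[0].weight.grad.shape)
```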
