Take action - Future of Life Institute


What you need to know about AI today

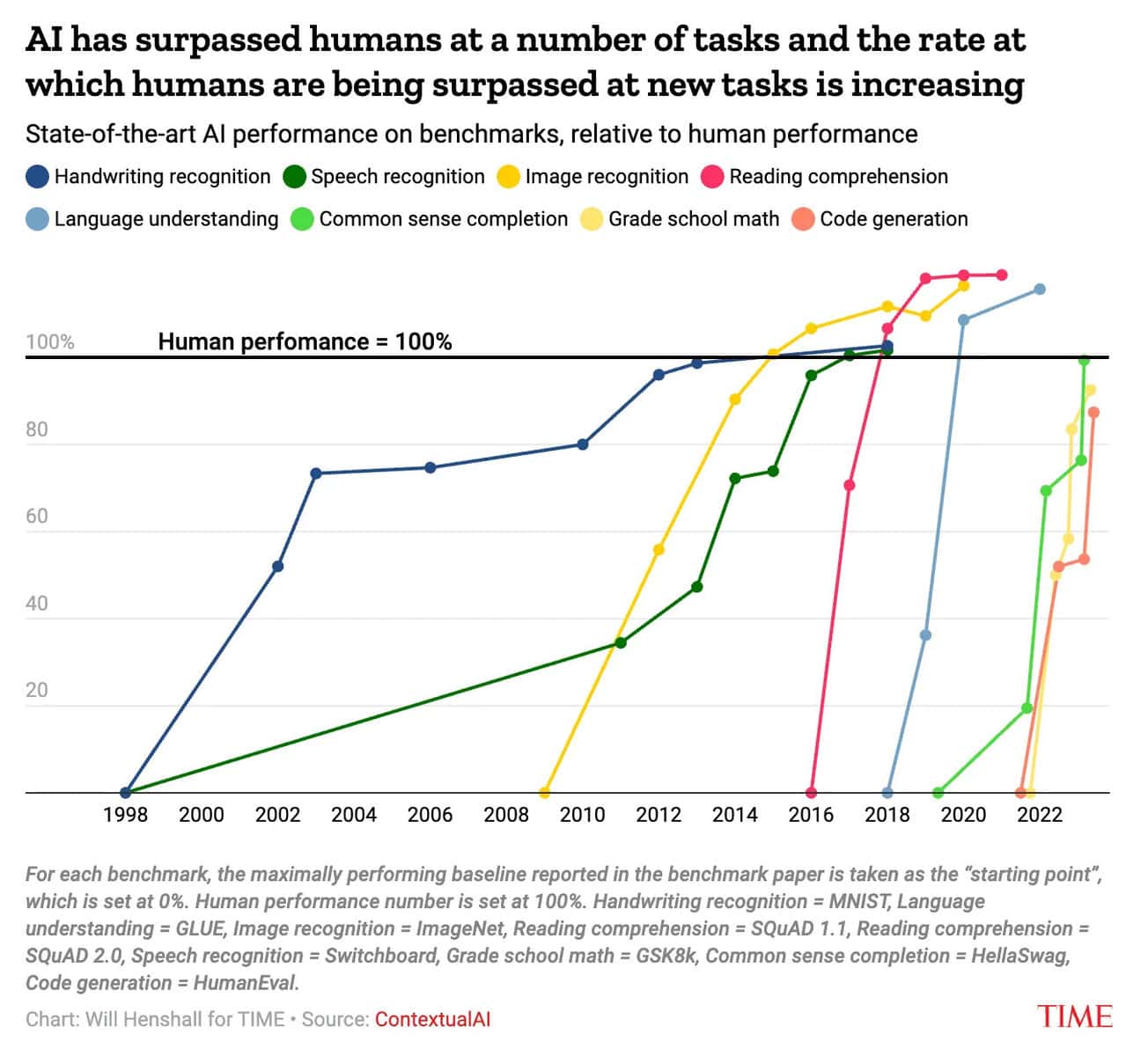

AI capabilities are advancing dramatically

…and accelerating.

In just the last couple of years, AI models have learned to impersonate humans, generate life-like audio, images, and videos, beat the world’s best coders at competitive coding challenges, and perform independent research. Every few months, AI systems unlock new capabilities, and new risks come with them.

As Big Tech companies spend more money and build ever-larger data centers, we can expect AI capabilities to keep accelerating.

Big Tech companies are explicitly building AI to replace humankind

…and they may not be far away from success.

The world’s largest tech companies (Google, Amazon, Microsoft, Facebook) have all stated that they are trying to build artificial general intelligence, or "AGI": a type of AI system that can do more-or-less all the tasks that a human can do.

Their ultimate goal is NOT to build AI products for you, but for your employer: AIs that can do your job faster and more cheaply, indefinitely. They want to replace you.

Experts are sounding the alarm

…and yet policymakers are asleep at the wheel.

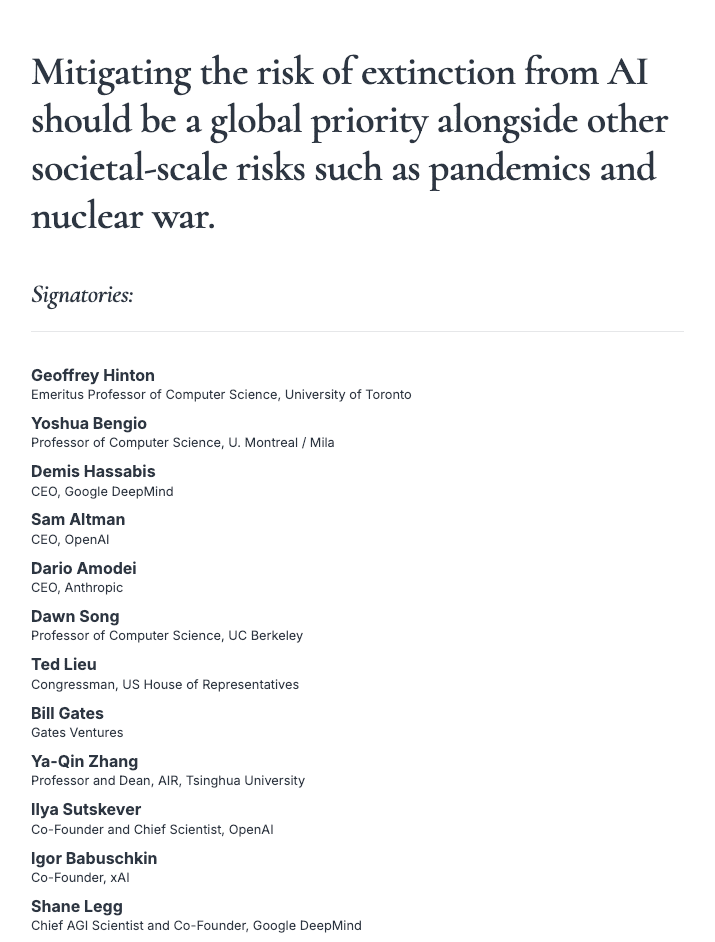

Thousands of experts have sounded the alarm about the massive risks of powerful AI systems, from bioweapons and infrastructure attacks to mass unemployment and even extinction.

You don't have to take their word for it, though. Many of the CEOs leading these tech companies have publicly admitted these risks are real, too.

Despite these early warnings, policymakers have repeatedly surrendered to Big Tech lobbyists and refused to enact common-sense guardrails.

Join #TeamHuman.

Since 2015, people like you have helped us fight for a human future, one in which humanity as a whole is empowered by AI tools to fulfill its potential, rather than replaced by AI.

Here are just a few examples of how we've worked with people around the world to keep the future human:

- More than 33,000 individuals, including business leaders, community organizers, families, and concerned citizens, signed the ‘Pause Giant AI Experiments’ open letter calling for a pause on the development of more powerful AI systems. The letter sparked a global discussion on AI safety and ethics.

- More than 1,000 people have participated in workshops, courses, and hackathons to build positive visions of the future with AI. These visions are shaping the narrative around which technologies we should build, and which we should not.

- The world’s religious communities are awakening to the potential benefits, and also the great risks, of powerful AI. They are making their voices heard, and providing moral leadership on the development of emerging technology.

- When the fate of a 2024 California bill to protect people from AI risks was hanging in the balance, thousands of people (including youth leaders, parents, academics, AI experts, and even former AI lab employees) posted their stories on social media and signed open letters to show their support.

- More than 100 million people have watched and shared ‘Slaughterbots’, our viral video series on autonomous weapons, which policymakers still refer to today.

Your voice matters; be ready to use it. Our Action Alerts enable you to take concrete action at exactly the moment it matters most.

We must keep control over the future of our world. We must stop the development of superhuman AI.

Receive action alerts

Join the fight and stay informed.

Receive invitations to take direct, meaningful action at the moment when it matters most.

Related Action

Subscribe to the FLI newsletter

Join 40,000+ other newsletter subscribers for monthly updates on the work we’re doing to prevent superhuman AI.

Start the conversation that matters.

Speak up, be heard. Tell your family and friends about the urgent need for action.

Most people have no idea that tech companies are building AIs to replace humans.

Use and customize these materials to help friends and family understand what's at stake.

Assets for social media

Use, modify, and share these materials in your DMs and feeds to spread the word.

Our plan to keep humans in control.

"Humanity is on the brink of developing artificial general intelligence that exceeds our own. It's time to close the gates on AGI and superintelligence... before we lose control of our future."

The latest essay from Anthony Aguirre, our Executive Director, covers how we got here, where AI is headed, the risks this will pose, and a four-step plan to protect our human future.

Show them what AI is capable of today.

Did you know that AI can already create an audio deepfake of a person with just a few seconds of audio, generate life-like video of an imagined scene, convince people online that it is a real person, beat professional software developers at coding challenges, and more?

The demos below show some of the most shocking capabilities of AI today, along with a glimpse of the near future. Share them with people you know who might not have noticed what AI has learned to do since ChatGPT launched.

Our recommended reads

These are some of the best writings on AI from others who are trying to make sense of a world with AI and steer it in a positive direction. They offer remarkable clarity and common sense. We highly recommend them to anyone who wants to understand what's coming next in AI and how we can chart a safe course.

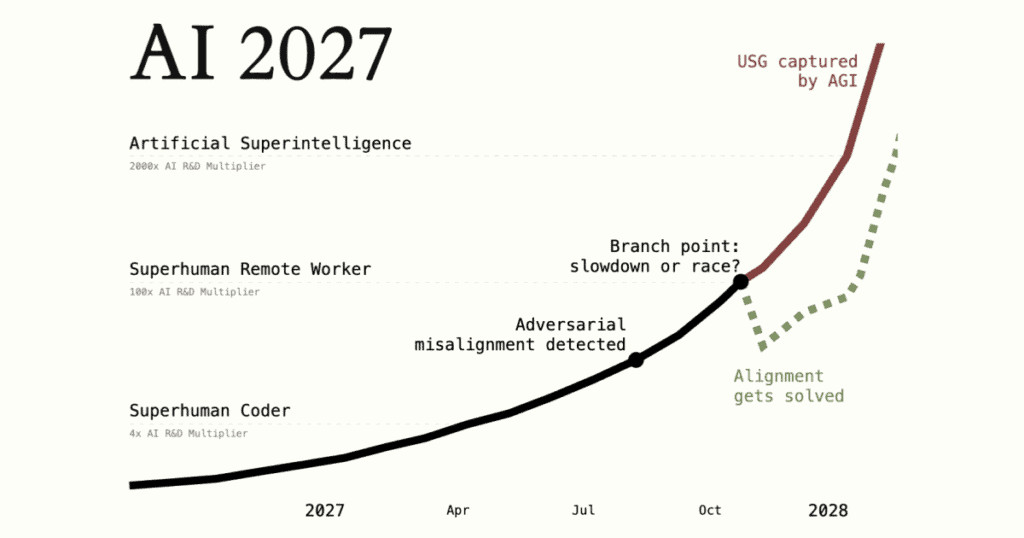

An in-depth prediction: What will happen if AI development continues on its current path?

A concrete plan for how to secure a safe and flourishing future for humans in an AI world.

How humanity risks extinction from powerful AI, where these risks come from, and how we can fix them.

Put pressure on companies to excel in safety.

We need to show AI companies that safety and security are a priority for customers and citizens. Let them know you’re watching: ask AI companies directly about their performance on the AI Safety Index, and share the Index with the frequent AI users you know.

Our AI Safety Index invites panels of AI experts to rank the leading AI companies based on their safety and security performance, across six domains.

Add your voice to the list of concerned citizens.

Individuals need to make their voices heard on emerging technologies and their risks. Over the years, we’ve facilitated this dialogue through many open letters. The letters below are still gathering signatures today, and they need your voice too.

Asilomar AI Principles

The Asilomar AI Principles, coordinated by FLI and developed at the Beneficial AI 2017 conference, are one of the earliest and most influential sets of AI governance principles.

11 August 2017

Participate in our programs and initiatives

Take a look at what we’re working on at the moment and let us know if you’re interested in collaborating, or if you have any ideas, resources, or connections that could help us.

If you want to produce creative content on the topic of AI risks, we want to give you the funds you need to get started. Apply to our Digital Media Accelerator and send us your best proposals.

Give to the cause.

We’ve hardly made a dent in our list of project ideas. Donations enable us to grow as an organisation and execute more of our plans.

Visit Our work to read more about the work we have done so far and the types of projects your donations would help support. Find out everything you need to know about donating on our dedicated page:

Help us improve this page

Did we miss something you need?

This page was recently overhauled to offer more, and more relevant, opportunities for action.

Let us know if you have any feedback or comments. We're particularly eager to hear:

- If there is something you were looking for, but couldn't find.

- Ideas for resources you would find helpful.

- Things you especially did or didn't like about this page.

Sign up for the Future of Life Institute newsletter

Join 70,000+ others receiving periodic updates on our work and focus areas.

Steering transformative technology towards benefiting life and away from extreme large-scale risks.


© 2026 Future of Life Institute. All rights reserved.