tf.math.softplus | TensorFlow v2.16.1

tf.math.softplus

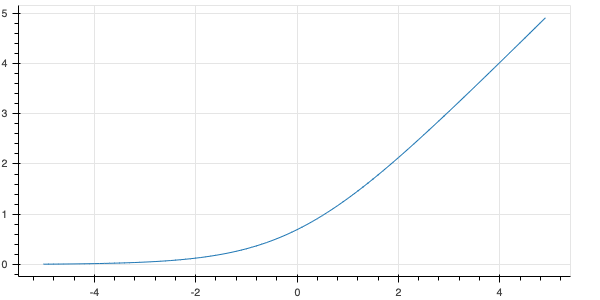

Computes elementwise softplus: softplus(x) = log(exp(x) + 1).
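The formula above can be checked by hand with a small pure-Python sketch. Evaluating `log(exp(x) + 1)` literally overflows for large `x`, so the sketch also uses the algebraically equivalent form `max(x, 0) + log1p(exp(-|x|))`. The helper names `softplus_naive` and `softplus_stable` are hypothetical and exist only for illustration; this is not a claim about how TensorFlow implements the op internally.

```python
import math


def softplus_naive(x: float) -> float:
    """Literal translation of softplus(x) = log(exp(x) + 1).

    math.exp raises OverflowError for large x (around x > 709 for doubles).
    """
    return math.log(math.exp(x) + 1.0)


def softplus_stable(x: float) -> float:
    """Equivalent rewrite: max(x, 0) + log1p(exp(-|x|)).

    exp is only ever called on a non-positive argument, so it cannot overflow.
    """
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


# Both forms agree where the naive one is safe to evaluate.
for x in (-3.0, 0.0, 1.0, 20.0):
    assert math.isclose(softplus_naive(x), softplus_stable(x), rel_tol=1e-12)

# softplus(0) is log(2), matching the first value in the documented example.
print(softplus_stable(0.0))
```

For very large inputs the stable form returns `x` itself (for example `softplus_stable(1000.0)` is `1000.0`), while the naive form raises `OverflowError`.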

tf.math.softplus(
    features, name=None
)

Used in the notebooks

| Used in the guide | Used in the tutorials |
|---|---|
| Introduction to gradients and automatic differentiation | TFP Probabilistic Layers: Regression; Gaussian Process Latent Variable Models; Bayesian Modeling with Joint Distribution |

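softplus is a smooth approximation of relu. Like relu, its output is never negative. Unlike relu, which returns exactly 0 for negative inputs, softplus is mathematically strictly positive for every finite input.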
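To make the relationship to relu concrete, the sketch below compares the two functions in plain Python. It relies on the identity `softplus(x) - relu(x) = log1p(exp(-|x|))`, so the gap is always positive, largest at `x = 0` (where it equals `log(2)`), and shrinks quickly as `|x|` grows. The functions `relu` and `softplus` here are hypothetical stand-ins written for this illustration, not the TensorFlow ops.

```python
import math


def relu(x: float) -> float:
    # Reference relu: max(x, 0).
    return max(x, 0.0)


def softplus(x: float) -> float:
    # Overflow-safe form of log(exp(x) + 1).
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


xs = [-5.0, -1.0, 0.0, 1.0, 5.0]

# Gap between softplus and relu at each sample point.
gaps = [softplus(x) - relu(x) for x in xs]

for x, gap in zip(xs, gaps):
    print(f"x={x:+.1f}  relu={relu(x):.4f}  softplus={softplus(x):.4f}  gap={gap:.4f}")
```

The printed gaps are symmetric around zero and peak at `x = 0`, which is why softplus is often described as a rounded version of relu's corner.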

Example:

>>> import tensorflow as tf
>>> tf.math.softplus(tf.range(0, 2, dtype=tf.float32)).numpy()
array([0.6931472, 1.3132616], dtype=float32)

| Args | Description |
|---|---|
| features | Tensor |
| name | Optional: name to associate with this operation. |

| Returns |
|---|
| Tensor |

Except as otherwise noted, the content of this page is licensed under the Creative Commons Attribution 4.0 License, and code samples are licensed under the Apache 2.0 License. For details, see the Google Developers Site Policies. Java is a registered trademark of Oracle and/or its affiliates. Some content is licensed under the numpy license.

Last updated 2024-04-26 UTC.